AI Security Risks: What DevSecOps Teams Must Know to Secure AI Systems

AI security risks are no longer limited to model behavior or data privacy. Today, they also affect the way software is written, reviewed, built, and shipped. As AI coding tools, agentic AI systems, and AI-powered workflows enter the SDLC, DevSecOps teams face a new kind of risk: faster code, faster automation, and faster mistakes.

However, this does not mean teams should slow down AI adoption. Instead, they need security controls that match the speed of AI-assisted development. In this guide, we explain the most important AI security risks, how they appear in real engineering workflows, and how teams can reduce exposure across code, dependencies, secrets, pipelines, and agents.

For a broader overview of how AI changes the threat landscape, see our guide to AI cybersecurity.

What Are AI Security Risks?

AI security risks are weaknesses, threats, or failure modes that appear when artificial intelligence is designed, trained, integrated, or used inside real systems. These risks can affect models, data, prompts, APIs, code, pipelines, and the tools that connect them.

The NCSC guidance on AI and cyber security explains that cyber security is a core requirement for safe and reliable AI systems. Similarly, the NIST AI Risk Management Framework gives organizations a structure to manage AI risk through governance, measurement, and practical controls.

For DevSecOps teams, the problem is more specific. AI is now part of the software delivery chain. It writes code, suggests dependencies, generates configuration, calls APIs, and sometimes acts autonomously. As a result, AI security risks must be handled inside the SDLC, not only at the model layer.

Why AI Security Risks Are Different Now

Traditional cybersecurity risks usually come from human-written code, vulnerable packages, weak credentials, or misconfigured infrastructure. Those risks still exist. However, AI changes how quickly they appear and how hard they are to detect.

AI-generated code may look correct but still miss authorization checks. An AI coding assistant may suggest a vulnerable package. An agentic workflow may call the wrong tool, access the wrong file, or expose a secret in a log. In addition, AI systems often depend on context, prompts, connectors, and external tools, which creates more places where security can fail.

The OWASP Top 10 for LLM Applications highlights risks such as prompt injection, sensitive information disclosure, supply chain issues, and excessive agency. These categories are useful because they connect AI behavior to real application security problems.

In other words, AI security risks are not only about the model. They are about the full system around the model.

Core AI Security Risks for DevSecOps Teams

Below are the risks that matter most when AI is used inside development, AppSec, and CI/CD workflows.

1. AI-Generated Code Vulnerabilities

AI coding tools can generate code that works but is not safe. For example, they may create SQL queries without proper parameterization, skip input validation, or implement weak authentication logic.

This happens because many AI systems generate likely code patterns based on training data. However, likely code is not always secure code. In practice, the model may reproduce insecure examples because they are common across public repositories.

Common examples include:

- SQL injection

- Cross-site scripting

- Missing authorization checks

- Weak session handling

- Unsafe deserialization

- Missing CSRF protection

Therefore, AI-generated code should be treated as untrusted until it passes SAST, policy checks, and review.
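
To make the SQL example above concrete, here is a minimal sketch using Python's standard `sqlite3` module. The table, the function names, and the in-memory database are hypothetical and exist only for illustration. It contrasts the string-concatenation pattern an assistant might plausibly produce with a parameterized query.

```python
import sqlite3


def find_user_unsafe(conn: sqlite3.Connection, username: str) -> list[tuple]:
    # Vulnerable pattern: user input is concatenated into the SQL string.
    query = "SELECT id, username FROM users WHERE username = '" + username + "'"
    return conn.execute(query).fetchall()


def find_user_safe(conn: sqlite3.Connection, username: str) -> list[tuple]:
    # Parameterized query: the driver binds the value, so it is never parsed as SQL.
    return conn.execute(
        "SELECT id, username FROM users WHERE username = ?", (username,)
    ).fetchall()


def make_demo_db() -> sqlite3.Connection:
    # Hypothetical in-memory database used only for this demonstration.
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, username TEXT)")
    conn.executemany("INSERT INTO users (username) VALUES (?)", [("alice",), ("bob",)])
    return conn
```

With the input `x' OR '1'='1`, the unsafe version returns every row in the table, while the safe version returns nothing. Both functions "work" for normal usernames, which is why this kind of flaw can pass a quick manual review.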

2. Supply Chain and Dependency Risks

AI tools do not only generate code. They also suggest packages, versions, scripts, and installation commands. This creates a direct path from AI recommendations to software supply chain risk.

For example, an AI tool may suggest:

- An outdated package

- A typosquatted dependency

- A hallucinated package name

- A package with suspicious install scripts

- A library that is vulnerable but still widely used

Moreover, attackers can exploit this behavior by registering package names that AI tools are likely to invent. This risk is often called slopsquatting. It turns model hallucination into a package supply chain attack.

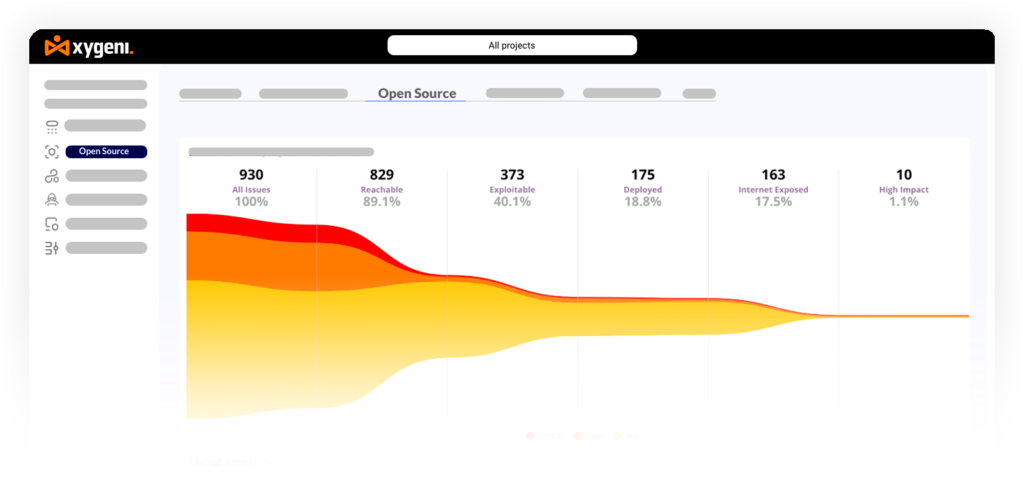

To reduce this risk, teams need SCA, malware detection, dependency policy enforcement, and reachability analysis. They should also use exploitability signals such as EPSS and active exploitation intelligence from the CISA Known Exploited Vulnerabilities Catalog.
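
As a rough illustration of a dependency policy check, the sketch below classifies an AI-suggested package name against an approved list and flags near-misses as possible typosquats. The allowlist contents, the function name, and the 0.85 similarity cutoff are assumptions chosen for the example. A real control would also query the registry and a malware feed.

```python
import difflib

# Hypothetical allowlist; in practice this would come from an internal registry or lockfile.
APPROVED_PACKAGES = frozenset({"requests", "numpy", "pandas", "cryptography"})


def review_suggested_package(name: str, approved: frozenset = APPROVED_PACKAGES) -> str:
    """Classify a suggested package as 'approved', 'possible-typosquat', or 'unknown'."""
    normalized = name.strip().lower()
    if normalized in approved:
        return "approved"
    # A near-miss of an approved name is treated as a typosquatting signal.
    close = difflib.get_close_matches(normalized, sorted(approved), n=1, cutoff=0.85)
    if close:
        return "possible-typosquat"
    # Anything else is unknown and may be a hallucinated name that needs manual review.
    return "unknown"
```

Names that come back as `unknown` are the ones most relevant to slopsquatting: they are neither approved nor close to anything approved, so they deserve a registry lookup before anyone runs an install command.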

3. Secrets Exposure in AI Workflows

Secrets exposure is one of the most practical AI security risks. Developers often paste context into AI tools. That context may include API keys, tokens, credentials, URLs, or internal configuration.

In addition, AI-generated code may include placeholders that look real, or worse, copy secrets back into source files, pipeline scripts, or logs. Once secrets enter Git history or CI/CD logs, they can remain exploitable long after the original commit.

Common exposure points include:

- Prompt history

- Generated code

- Git commits

- CI/CD logs

- IaC files

- Container images

- Shared workspaces

For this reason, teams should combine IDE-level scanning, pre-commit checks, repository history scans, CI/CD log scanning, and automatic revocation.
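
The sketch below shows the general shape of pattern-based secrets detection and redaction, the kind of check that could run before text is pasted into a prompt or written to a log. The three rules are illustrative only, and the function names are hypothetical. Production scanners rely on much larger rule sets, entropy analysis, and provider-side validation.

```python
import re

# Illustrative patterns only; real scanners use far larger rule sets plus entropy checks.
SECRET_PATTERNS = {
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "github_token": re.compile(r"\bghp_[A-Za-z0-9]{36}\b"),
    "private_key_header": re.compile(r"-----BEGIN (?:RSA |EC |OPENSSH )?PRIVATE KEY-----"),
}


def find_secrets(text: str) -> list[tuple[int, str]]:
    """Return (line_number, rule_name) for each line that matches a secret pattern."""
    findings = []
    for line_number, line in enumerate(text.splitlines(), start=1):
        for rule_name, pattern in SECRET_PATTERNS.items():
            if pattern.search(line):
                findings.append((line_number, rule_name))
    return findings


def redact(text: str) -> str:
    """Replace every match with a placeholder before text reaches a prompt or log."""
    for rule_name, pattern in SECRET_PATTERNS.items():
        text = pattern.sub(f"[REDACTED:{rule_name}]", text)
    return text
```

The same two functions can serve different exposure points: `find_secrets` fits a pre-commit hook or log scan, while `redact` fits a wrapper around whatever sends context to an AI tool.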

4. AI Agent and Tool Misuse

Agentic AI introduces a new layer of risk because agents do not only suggest actions. They can take actions.

An AI agent may run shell commands, edit files, call APIs, open pull requests, modify CI workflows, or interact with cloud services. Although this creates huge productivity gains, it also increases the blast radius of mistakes.

Key risks include:

- Unsafe shell execution

- Over-permissioned API keys

- Unauthorized code changes

- MCP or API connector misconfiguration

- Tool calls outside approved scope

- Environment access beyond what the task requires

The OWASP LLM Top 10 category for excessive agency is especially relevant here. If an agent has too much access, a bad instruction, prompt injection, or compromised tool can turn into a real security event.
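
One way to limit excessive agency is to check every tool call against an explicit scope before it runs. The following is a minimal sketch under assumed names: `ToolPolicy`, `ToolCallDenied`, and the tool names are hypothetical and not taken from any specific agent framework. Paths are normalized first so that `..` segments cannot escape the approved prefix.

```python
import posixpath
from dataclasses import dataclass, field


class ToolCallDenied(Exception):
    """Raised when an agent requests a tool or path outside its approved scope."""


@dataclass
class ToolPolicy:
    # Hypothetical policy shape: tool name -> path prefixes that tool may touch.
    allowed_tools: dict[str, tuple[str, ...]] = field(default_factory=dict)

    def check(self, tool: str, path: str) -> None:
        """Raise ToolCallDenied unless the tool and the normalized path are in scope."""
        if tool not in self.allowed_tools:
            raise ToolCallDenied(f"tool not approved: {tool}")
        # Normalize so that 'src/../.github/x' is evaluated as '.github/x'.
        normalized = posixpath.normpath(path)
        prefixes = self.allowed_tools[tool]
        if not any(normalized.startswith(prefix) for prefix in prefixes):
            raise ToolCallDenied(f"path outside approved scope for {tool}: {normalized}")
```

A policy such as `ToolPolicy({"read_file": ("src/", "docs/"), "write_file": ("src/",)})` lets the agent read documentation and edit source files, but denies shell execution and any write to workflow files by default.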

5. CI/CD and Pipeline Risks

AI-generated code eventually reaches the pipeline. At that point, risk moves from source code into builds, artifacts, secrets, dependencies, and deployment workflows.

For example, an AI-assisted change may:

- Add an unsafe build step

- Modify a GitHub Actions workflow

- Pull a malicious package during install

- Print secrets into build logs

- Disable a security control

- Change deployment logic

Consequently, CI/CD security becomes essential for AI adoption. Pipeline guardrails should block unsafe patterns before they reach production. For deeper context, see our content on CI/CD security and software supply chain security.
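
As an illustration of a pipeline guardrail, the sketch below scans the text of a GitHub Actions workflow for three patterns that usually deserve review: actions not pinned to a full commit SHA, remote scripts piped into a shell, and the `pull_request_target` trigger. The function name and the rule set are assumptions for this example. It uses line-based regular expressions rather than a YAML parser, so it is a rough first pass and not a complete audit.

```python
import re

USES_RE = re.compile(r"^\s*-?\s*uses:\s*([^\s#]+)")
FULL_SHA_RE = re.compile(r"@[0-9a-f]{40}$")
PIPE_TO_SHELL_RE = re.compile(r"\b(?:curl|wget)\b[^|\n]*\|\s*(?:sudo\s+)?(?:ba)?sh\b")


def audit_workflow(text: str) -> list[str]:
    """Return human-readable findings for risky lines in a workflow file."""
    findings = []
    for number, line in enumerate(text.splitlines(), start=1):
        uses = USES_RE.match(line)
        if uses:
            ref = uses.group(1)
            # Local actions (./path) and docker:// refs are out of scope for this sketch.
            if not ref.startswith(("./", "docker://")) and not FULL_SHA_RE.search(ref):
                findings.append(f"line {number}: action not pinned to a commit SHA: {ref}")
        if PIPE_TO_SHELL_RE.search(line):
            findings.append(f"line {number}: remote script piped to a shell")
        if "pull_request_target" in line:
            findings.append(f"line {number}: pull_request_target trigger needs review")
    return findings
```

Running a check like this on every pull request that touches workflow files gives reviewers a short list of lines to inspect, whether the change came from a developer or from an agent.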

6. Data Leakage and Prompt Injection

Prompt injection is one of the best-known AI security risks, but it is often misunderstood. It is not only a chatbot problem. It can affect any AI workflow that accepts external input and then uses that input to guide actions.

For example, a malicious issue description, README file, support ticket, or dependency documentation page can include hidden instructions. If an AI agent reads that content and follows it, the attacker may influence tool calls, code changes, or data access.

Data leakage can happen in similar ways. The model may reveal sensitive context, summarize private files, or send confidential data to external services. Therefore, AI systems need prompt filtering, output controls, tool restrictions, and clear boundaries around what data they can access.
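
The sketch below shows one small piece of such a boundary: screening external content before an agent reads it, and wrapping it so downstream prompts can label it as data. The phrase list, the `<untrusted_content>` delimiter, and the function name are assumptions made for this example. Pattern matching cannot reliably detect prompt injection, so treat this as a tripwire that complements tool restrictions and access limits rather than replacing them.

```python
import re

# Heuristic phrases only; pattern matching cannot reliably detect prompt injection.
SUSPICIOUS_PATTERNS = [
    re.compile(r"ignore (?:all |any )?(?:previous|prior|above) instructions", re.I),
    re.compile(r"disregard (?:the )?(?:system|developer) prompt", re.I),
    re.compile(r"<!--.*?-->", re.S),  # hidden HTML comments in issues or READMEs
]


def screen_untrusted(text: str) -> dict:
    """Flag instruction-like content and wrap the text as untrusted data."""
    hits = [p.pattern for p in SUSPICIOUS_PATTERNS if p.search(text)]
    return {
        "flagged": bool(hits),
        "matched": hits,
        # Delimit the content so downstream prompts can label it as data, not instructions.
        "wrapped": f"<untrusted_content>\n{text}\n</untrusted_content>",
    }
```

A flagged result is a reason to route the content to human review or to run the agent with read-only tools, not proof of an attack.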

AI Security Risks Across the SDLC

AI security risks appear at different stages of the software lifecycle. The key is to secure each stage, not just the final application.

| SDLC Phase | AI Security Risk | Example | Recommended Control |
|---|---|---|---|
| IDE | Unsafe AI-generated code | An AI coding assistant suggests insecure authentication logic. | SAST and secure coding feedback. |
| Commit | Secrets exposure | A token appears in generated code or commit history. | Secrets detection, pre-commit checks, and auto-revocation. |
| Pull Request | Policy bypass | Generated code changes access control rules without review. | PR guardrails and policy enforcement. |
| Build | Malicious dependency | An AI-suggested package includes suspicious install behavior. | SCA, malware detection, and dependency policy checks. |
| CI/CD | Pipeline tampering | An agent modifies workflow files or deployment scripts. | CI/CD security checks and anomaly detection. |
| Runtime | Prompt injection or data leakage | External input causes an AI workflow to reveal sensitive context. | Prompt controls, access restrictions, and monitoring. |

AI Security Risks vs Traditional Cybersecurity Risks

Traditional cybersecurity still matters. However, AI adds new behavior patterns that require different controls.

| Area | Traditional Cybersecurity Risk | AI Security Risk |
|---|---|---|
| Code | Human-written vulnerabilities. | AI-generated insecure patterns at higher speed. |
| Dependencies | Known vulnerable packages. | Hallucinated, malicious, or unsafe AI-suggested packages. |
| Secrets | Credentials accidentally committed by developers. | Secrets copied into prompts, generated code, or logs. |
| Tools | Manual misuse of developer tools. | Autonomous agents misusing tools or APIs. |
| Pipelines | Misconfigured CI/CD workflows. | Agent-generated workflow changes or unsafe automation. |

Real-World AI Security Risk Examples

AI security risk is not theoretical. Several public frameworks and research efforts now track these issues more formally.

The MIT AI Risk Repository catalogs more than 1,700 AI risks across different causes and domains. Meanwhile, OWASP provides practical categories for LLM application risks, including prompt injection, sensitive information disclosure, supply chain vulnerabilities, and excessive agency.

For DevSecOps teams, the most relevant examples often appear in software delivery:

- AI tools suggesting vulnerable code

- AI agents modifying workflow files

- AI-generated dependencies introducing supply chain exposure

- Secrets leaking through prompts, logs, or commits

- Agentic workflows calling tools outside approved scope

In short, AI security risks become much more serious when AI systems can touch code, credentials, packages, pipelines, or infrastructure.

How to Mitigate AI Security Risks in Practice

The best way to reduce AI security risks is to treat AI-assisted development as part of the SDLC. That means scanning early, validating often, and enforcing policies where developers actually work.

1. Scan AI-Generated Code in the IDE

Developers should see security feedback while they are writing or accepting AI-generated code. This reduces context switching and helps fix issues before they reach Git.

Use:

- SAST in the IDE

- Inline vulnerability explanations

- Secure fix suggestions

- Policy-aware remediation

This is especially important for AI coding assistants, where unsafe suggestions can enter the codebase quickly.

2. Validate Dependencies Before Build

AI-suggested dependencies must be verified before they are installed or shipped. Therefore, teams should enforce dependency controls during development and CI/CD.

Use:

- SCA

- Malware detection

- Typosquatting detection

- EPSS scoring

- Reachability analysis

- Policy-based blocking

This helps prioritize the packages that represent real risk, not just theoretical exposure.
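
To show how these signals can combine into a decision, here is a minimal sketch of a prioritization rule. The `Finding` fields, the 0.5 EPSS threshold, and the three outcomes are assumptions for illustration. Each team would tune them to its own risk appetite.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Finding:
    package: str
    cve: str
    epss: float       # EPSS probability in [0, 1]
    in_kev: bool      # listed in the CISA Known Exploited Vulnerabilities Catalog
    reachable: bool   # vulnerable code is reachable from the application


def decide(finding: Finding, epss_block_threshold: float = 0.5) -> str:
    """Return 'block', 'review', or 'allow' for a dependency finding."""
    if not finding.reachable:
        # Unreachable code lowers urgency, but known exploitation still merits a look.
        return "review" if finding.in_kev else "allow"
    if finding.in_kev or finding.epss >= epss_block_threshold:
        return "block"
    return "review"
```

The design choice here is that reachability acts as the first filter and exploitation evidence as the second, so a reachable vulnerability with active exploitation blocks the build while an unreachable, low-probability one does not interrupt developers.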

3. Detect and Revoke Secrets Automatically

Secrets scanning must cover more than source code. AI-assisted workflows can expose credentials in many places.

Use:

- Pre-commit scanning

- Repository history scanning

- Pipeline log scanning

- IaC scanning

- Container image scanning

- Automated revocation

As a result, teams reduce the time between exposure and containment.

4. Enforce Guardrails in CI/CD

Guardrails should decide whether a change is safe enough to proceed. Reporting is useful, but blocking is necessary for critical risk.

Guardrails should cover:

- New critical vulnerabilities

- Secrets

- Malicious dependencies

- Unpinned or untrusted packages

- Unsafe workflow changes

- Missing SBOMs

- Policy violations

In addition, teams should start with report-only mode when needed, then move toward blocking as confidence grows.
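
The difference between report-only and blocking mode can be sketched as a small gate function that a pipeline step would call. The finding format, the `Mode` enum, and the rule that only `critical` findings block are assumptions for this example.

```python
from enum import Enum


class Mode(Enum):
    REPORT_ONLY = "report-only"
    BLOCKING = "blocking"


# Assumption for this sketch: only critical findings can fail the pipeline.
BLOCKING_SEVERITIES = {"critical"}


def evaluate_gate(findings: list[dict], mode: Mode) -> tuple[int, list[str]]:
    """Return (exit_code, messages). Exit code 1 fails the pipeline step."""
    critical = [f for f in findings if f["severity"] in BLOCKING_SEVERITIES]
    messages = [f"{f['severity'].upper()}: {f['rule']}" for f in findings]
    if critical and mode is Mode.BLOCKING:
        return 1, messages
    # Report-only mode always passes, but still surfaces every finding.
    return 0, messages
```

Because both modes produce the same messages, a team can run in report-only mode for a few weeks, measure how often the gate would have failed, and then switch the mode without changing anything else.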

5. Monitor Agentic Tool Behavior

Agentic AI systems need observability. If an agent can edit files, trigger builds, or call APIs, teams need to know what it did, when it did it, and whether the action was expected.

Monitor:

- Tool calls

- Workflow file changes

- Repository write activity

- Network destinations

- Secrets access

- Pull request creation

- Pipeline triggers

Without this visibility, agent autonomy becomes hard to trust.
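
A minimal sketch of that visibility is an audit log that records each tool call with a timestamp and marks whether the tool was expected for the task. The class name, the record fields, and the notion of an "expected tools" set are hypothetical. A real deployment would ship these records to a central log store.

```python
import json
from datetime import datetime, timezone


class AgentAuditLog:
    """Records what an agent did, when, and whether the tool was expected."""

    def __init__(self, expected_tools: set[str]):
        self.expected_tools = expected_tools
        self.records: list[dict] = []

    def record(self, agent: str, tool: str, target: str) -> dict:
        entry = {
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "agent": agent,
            "tool": tool,
            "target": target,
            "expected": tool in self.expected_tools,
        }
        self.records.append(entry)
        return entry

    def unexpected(self) -> list[dict]:
        """Return every recorded call whose tool was not in the expected set."""
        return [r for r in self.records if not r["expected"]]

    def to_jsonl(self) -> str:
        """Serialize records as JSON Lines for export to a log pipeline."""
        return "\n".join(json.dumps(r, sort_keys=True) for r in self.records)
```

The `unexpected()` view answers the third question directly: it lists the actions that nobody planned for, which is usually where an investigation should start.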

Where Xygeni Helps Reduce AI Security Risks

Xygeni focuses on securing AI-assisted development across the full software delivery chain. Rather than treating AI risk as a separate category, it connects code, dependencies, secrets, pipelines, and business context.

For example:

- SAST helps detect insecure AI-generated code early.

- SCA validates dependencies and detects malicious packages.

- Secrets Protection detects exposed credentials across repositories and pipelines.

- CI/CD Security enforces policies before unsafe changes move forward.

- Anomaly Detection identifies unusual behavior in development and delivery workflows.

- ASPM correlates findings into one risk view so teams can prioritize what matters.

This matters because AI security risks are cross-layer by nature. A vulnerable dependency, exposed token, and unsafe workflow change may look separate in point tools. However, together they can represent a much larger attack path.

AI Security Risk Management Frameworks to Know

Several frameworks help teams structure their work.

The NIST AI Risk Management Framework helps organizations map, measure, manage, and govern AI risks. It is useful for leadership, compliance, and risk programs.

The OWASP Top 10 for LLM Applications is more practical for AppSec teams because it maps directly to technical risks such as prompt injection, sensitive data exposure, supply chain vulnerabilities, and excessive agency.

The NCSC AI and cyber security guidance is useful for security leaders who need to understand how AI changes organizational cyber risk.

Together, these resources show one clear point: AI security must be managed across people, processes, systems, and software delivery workflows.

Checklist: How to Reduce AI Security Risks

Use this checklist as a practical starting point.

| Control Area | What to Do | Why It Matters |
|---|---|---|
| AI-generated code | Run SAST in the IDE, PR, and CI/CD pipeline. | Prevents insecure code from reaching production. |
| Dependencies | Use SCA, malware detection, EPSS, and reachability. | Blocks risky AI-suggested packages. |
| Secrets | Scan commits, logs, history, IaC, and containers. | Reduces credential exposure and misuse. |
| CI/CD | Enforce pipeline guardrails and policy gates. | Stops unsafe builds and deployments. |
| Agent tools | Monitor tool calls, API access, and workflow changes. | Limits excessive agency and unexpected behavior. |
| Risk management | Use ASPM to correlate findings across layers. | Helps teams focus on real business risk. |

Key Takeaways

- AI security risks now affect code, dependencies, secrets, pipelines, and agents.

- Traditional AppSec tools are still needed, but they must run earlier and with more context.

- AI-generated code should be treated as untrusted until validated.

- AI agent workflows need guardrails, permissions, and observability.

- DevSecOps teams need unified visibility across the SDLC to manage AI risk effectively.

FAQ: AI Security Risks

What are AI security risks?

AI security risks are threats or weaknesses that appear when AI systems are built, integrated, or used. They can affect models, data, prompts, code, dependencies, APIs, and pipelines.

What are the biggest AI security risks for DevSecOps teams?

The biggest risks include insecure AI-generated code, vulnerable dependencies, secrets exposure, prompt injection, excessive agent permissions, and unsafe CI/CD automation.

Why are AI security risks different from traditional cybersecurity risks?

AI systems can generate code, suggest dependencies, call tools, and act autonomously. As a result, risks appear faster and across more layers of the SDLC.

How can teams reduce AI security risks?

Teams can reduce risk by scanning AI-generated code, validating dependencies, detecting secrets, enforcing CI/CD guardrails, monitoring agent behavior, and correlating findings through ASPM.

Is AI-generated code safe?

AI-generated code is not safe by default. It should be reviewed, scanned, tested, and validated before it reaches production.

Final Thoughts: AI Security Risks Need SDLC-Level Controls

AI changes the speed and shape of software risk. It helps teams build faster, but it also introduces new ways for insecure code, exposed secrets, unsafe dependencies, and risky automation to enter the delivery chain.

Therefore, AI security cannot be handled only with model governance or policy documents. It needs practical controls inside the SDLC: IDE feedback, SAST, SCA, secrets detection, CI/CD guardrails, anomaly detection, and ASPM-level correlation.

The teams that manage AI security risks well will not be the ones that block AI adoption. They will be the ones that build the right safety layer around it.