Top 6 SAST Tools for 2026: Compared by Accuracy, AI Remediation, and Real-World Coverage

Static Application Security Testing is one of the most widely adopted practices in DevSecOps, but adoption does not guarantee effectiveness. In 2026, over 52,000 new CVEs were reported, and 72% of security breaches traced back to exploitable software vulnerabilities. The difference between SAST tools is not whether they scan your code: it is whether they find real vulnerabilities accurately, reduce the noise that overwhelms security teams, and help developers fix issues without slowing delivery. This guide compares the top 6 SAST tools using objective benchmark data from the OWASP Benchmark Project, covering detection accuracy, false positive rates, AI remediation capability, malware detection, and CI/CD integration.

Top 6 SAST Tools in 2026

| Tool | True Positive Rate | False Positive Rate | AI AutoFix | Malware Detection | Best For |
|---|---|---|---|---|---|
| Xygeni | 100% | 16.7% | Yes, context-aware with Remediation Risk | Yes, behavior-based | Teams needing accuracy, AI remediation, and supply chain protection |
| Snyk Code | 97.18% | 34.55% | Partial, requires manual review | No | Developer-first teams already in the Snyk ecosystem |
| Semgrep | 87.06% | 42.09% | Rule-based, requires tuning | No | Teams wanting customizable open-source scanning |
| SonarQube | 50.36% | Not published | AI CodeFix for quality issues | No | Code quality-focused teams with basic security needs |
| CodeQL | Not published | Not published | No | No | Security researchers and advanced audit workflows |
| Mend SAST | Not published | Not published | Yes, dual-phase AI scanning | No | Mid-to-large teams wanting unified AppSec platform |

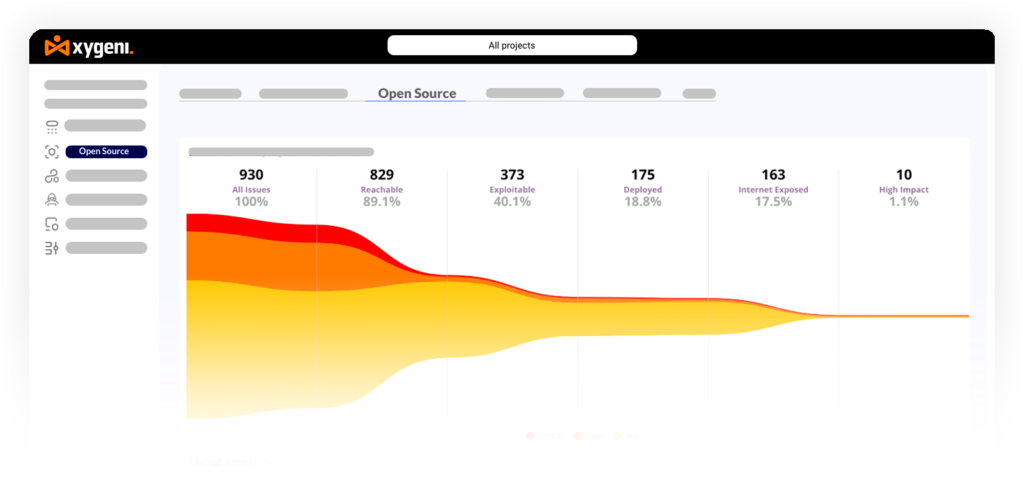

Overview: Xygeni SAST is a modern static code analysis tool built for DevSecOps teams that need high detection accuracy, low false positive noise, and AI-powered remediation in a single workflow. Unlike traditional SAST tools that stop at flagging vulnerabilities, Xygeni combines static analysis with malware detection, AI AutoFix, and Remediation Risk analysis to close the loop between finding and fixing without breaking builds or slowing delivery.

According to the OWASP Benchmark Project, Xygeni SAST achieves a 100% True Positive Rate with a 16.7% False Positive Rate, outperforming every other tool in this comparison that has published benchmark data on both detection accuracy and noise reduction. It reaches 100% accuracy on SQL Injection (CWE-89) and Cross-Site Scripting (CWE-79), with zero false positives on Weak Encryption (CWE-327) and Weak Hashing (CWE-328).

This level of precision matters because alert fatigue is one of the leading reasons security findings go unresolved. When developers trust that flagged issues are real, remediation rates improve significantly. You can read more about how to reduce AppSec alert fatigue and AI SAST for both human and AI-generated code for additional context.

Key Features:

- 100% True Positive Rate and 16.7% False Positive Rate on OWASP Benchmark, the strongest accuracy profile in this comparison

- AI AutoFix with Remediation Risk analysis: generates secure, context-aware code fixes directly in the IDE or CI pipeline, validated for safety and breaking-change impact before application. Reduces remediation effort by up to 80% according to Xygeni’s own measurement data

- Malware detection: inspects proprietary code for malware signatures, obfuscated logic, and suspicious patterns aligned with CWE-506 (Embedded Malicious Code) and other stealth threats, catching backdoors and trojans before they reach production

- Security Guardrails: enforces policies that block risky patterns and dangerous code from merging into the main branch, keeping developer workflows uninterrupted while preventing unsafe code from progressing

- Agentic AI through DevAI: continuous incremental scanning inside the IDE as developers write code, with exploit path analysis and policy-driven guardrails enforced through the MCP Server

- IDE integration: scan directly in the editor, review vulnerability metadata, and apply fixes without leaving the development environment

- Native CI/CD integration with GitHub Actions, GitLab CI, Jenkins, Bitbucket Pipelines, and Azure DevOps

- Custom rule support with full visibility into detection logic, with no black-box engine

- Risk-based prioritization combining exploitability, reachability, and business context to surface what truly matters

- Part of a unified AppSec platform covering SAST, SCA, DAST, IaC, Secrets, CI/CD Security, and ASPM

Best for: DevSecOps teams that need the highest detection accuracy available, AI-powered remediation that is safe to apply, and supply chain protection that goes beyond CVE scanning.

Pricing: Starts at $33/month for the complete all-in-one platform. Includes SAST, SCA, CI/CD Security, Secrets Detection, IaC Security, and Container Scanning. Unlimited repositories and contributors with no per-seat pricing.

Reviews:

“The visibility of our open-source supply chain dependencies and real-time detection of vulnerabilities have been invaluable.”

2. Snyk SAST Tool

Overview: Snyk Code is a developer-friendly static code analysis tool built for speed and simplicity. It integrates directly into IDEs, Git workflows, and CI/CD pipelines, making it easy for teams already using other Snyk products to extend coverage to source code. It recently introduced an AI-powered AutoFix feature that suggests code fixes for common vulnerability patterns, though accuracy and context-awareness vary by language and framework, and manual review is often needed before applying changes.

According to OWASP Benchmark data, Snyk Code achieves a 97.18% True Positive Rate but carries a 34.55% False Positive Rate, meaning roughly one in three of the benchmark's non-vulnerable test cases is incorrectly flagged. For teams without dedicated security triage capacity, this noise level can slow down developer workflows and reduce trust in findings over time.

Key Features:

- 97.18% True Positive Rate on OWASP Benchmark

- IDE and CI/CD integration for real-time static code feedback inside developer environments

- AI AutoFix suggestions for common vulnerability patterns, with manual review required for safety

- Continuous monitoring for newly disclosed vulnerabilities across scanned projects

- License compliance and policy enforcement available through separate Snyk plan modules

Cons:

- 34.55% False Positive Rate produces significant noise, increasing triage burden for security and development teams

- No malware detection or supply chain threat protection against typosquatting or dependency confusion

- AI-generated fixes are not always tailored to specific code context and require developer validation

- Full security coverage requires purchasing SCA, IaC, Secrets, and Container scanning as separate plan modules

Pricing: Starts at $125/month for a minimum of 5 contributors, covering SAST only. Additional features are sold separately. Enterprise plans required for teams over 10 contributors.

Reviews:

“It would be helpful if we get a recommendation while doing the scan about the necessary things we need to implement after identifying the vulnerabilities.”

“Provides clear information and is easy to follow with good feedback regarding code practices.”

3. Semgrep SAST Tool

Overview: Semgrep is an open-source, rule-based static code analysis tool built for speed, customization, and multi-language support. It runs fast without requiring compilation and allows security teams to write precise detection rules tailored to their codebase. It supports basic AutoFix through the fix: key in custom rules and AI-assisted suggestions via Semgrep Assistant, though both require tuning and manual review before applying changes in production.

OWASP Benchmark data shows Semgrep achieves an 87.06% True Positive Rate with a 42.09% False Positive Rate, the highest false positive rate of the tools in this comparison with published data. This means that without significant custom rule tuning, teams will spend substantial time triaging non-issues. For context on static vs dynamic analysis approaches, Semgrep’s rule-based model gives it precision where rules are well-written and blind spots where they are not.

Key Features:

- Custom security rule engine supporting precise, codebase-specific detection

- Fast scanning without compilation, with broad multi-language support

- Rule-based AutoFix via the --autofix flag and AI-assisted suggestions through Semgrep Assistant

- Open source core with a commercial tier for advanced features

- SARIF output and CI/CD integration for pipeline embedding
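SARIF output is what makes pipeline embedding practical: a CI job can read the report and decide whether to fail the build. The following is a minimal sketch of such a gate, not any vendor's implementation. The function names, the sample report, and the `max_errors` threshold are hypothetical; only the `runs` / `results` / `level` layout comes from the SARIF 2.1.0 format.

```python
import json
from collections import Counter


def count_results_by_level(sarif: dict) -> Counter:
    """Count findings per severity level across all runs in a SARIF report."""
    counts = Counter()
    for run in sarif.get("runs", []):
        for result in run.get("results", []):
            # Simplification: a result without an explicit level is counted
            # as "warning"; rule-level default configuration is ignored here.
            counts[result.get("level", "warning")] += 1
    return counts


def gate_passes(sarif: dict, max_errors: int = 0) -> bool:
    """Return True when the number of error-level findings is within budget."""
    return count_results_by_level(sarif)["error"] <= max_errors


# Hypothetical report with three findings, one of them without a level.
sample_report = json.loads("""
{
  "version": "2.1.0",
  "runs": [
    {
      "tool": {"driver": {"name": "example-scanner"}},
      "results": [
        {"ruleId": "sql-injection", "level": "error",
         "message": {"text": "Tainted input reaches a SQL query"}},
        {"ruleId": "weak-hash", "level": "warning",
         "message": {"text": "MD5 used for hashing"}},
        {"ruleId": "debug-endpoint",
         "message": {"text": "Debug endpoint left enabled"}}
      ]
    }
  ]
}
""")

if __name__ == "__main__":
    print(dict(count_results_by_level(sample_report)))
    print("gate passes:", gate_passes(sample_report))
```

In a real pipeline the report would be loaded from the scanner's output file, and the job would exit with a non-zero status when `gate_passes` returns False.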

Cons:

- 87.06% True Positive Rate and 42.09% False Positive Rate on OWASP Benchmark, requiring tuning to reduce noise

- No malware detection or protection against supply chain attacks

- Custom rule maintenance requires ongoing security team investment to stay effective

- Reachability analysis limited to a subset of supported languages

Pricing: Starts at $100/month per contributor for Code, Supply Chain, and Secrets combined. All product licenses must be purchased in equal quantities, with no partial coverage options.

Reviews:

“There should be more information on how to acquire the system, catering to beginners in application security, to make it more user-friendly.”

4. SonarQube SAST Tool

Overview: SonarQube is widely adopted for enforcing code quality and maintainability standards, with static analysis security capabilities built on top of that foundation. It detects security hotspots and common vulnerabilities while promoting clean coding practices. It has introduced AI CodeFix suggestions for select issues, though these focus primarily on maintainability rather than critical security vulnerabilities and still require developer validation.

OWASP Benchmark data shows SonarQube achieves a 50.36% True Positive Rate, the lowest of the tools with published benchmark data in this comparison. For teams where the primary goal is code quality with basic security visibility, it remains a well-established choice. For teams where the primary goal is security accuracy, the detection rate warrants careful consideration alongside the broader software development security best practices.

Key Features:

- Multi-language static analysis with a focus on code quality, maintainability, and security hotspots

- Quality gates that block builds when defined thresholds are exceeded

- AI CodeFix suggestions for quality and style issues, with security coverage more limited

- CI/CD integration with Jenkins, GitLab, Azure DevOps, GitHub Actions, and Bitbucket

- IDE plugins for real-time feedback during development

Cons:

- 50.36% True Positive Rate on OWASP Benchmark, meaning a significant proportion of real vulnerabilities go undetected

- No malware detection or supply chain threat visibility

- AI CodeFix focused on maintainability, not critical security remediation

- SAST-only platform; no SCA, secrets, IaC, or container security included

Pricing: Starts at $65/month for the Team Plan, covering SAST only. Pricing scales with lines of code, starting at 100K LoC and increasing by $6 per additional 10K LoC, with a hard cap at 1.9M LoC.
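To see how lines-of-code pricing scales, the figures above can be turned into a small estimator. This is a rough sketch based only on the numbers quoted in this guide. The function name is hypothetical, and charging a partially used 10K block as a full block is an assumption, not confirmed vendor behavior.

```python
BASE_PRICE_USD = 65       # monthly price at the entry tier, per this guide
BASE_LOC = 100_000        # lines of code included in the entry tier
STEP_LOC = 10_000         # size of each additional block
STEP_PRICE_USD = 6        # monthly price per additional block
MAX_LOC = 1_900_000       # hard cap quoted for the Team Plan


def estimate_team_plan_monthly_cost(lines_of_code: int) -> int:
    """Estimate the monthly cost in USD for a codebase of the given size."""
    if lines_of_code < 0:
        raise ValueError("lines_of_code must be non-negative")
    if lines_of_code > MAX_LOC:
        raise ValueError("codebase exceeds the 1.9M LoC cap for this plan")
    extra_loc = max(0, lines_of_code - BASE_LOC)
    # Assumption: a partially used block is billed as a whole block.
    extra_blocks = (extra_loc + STEP_LOC - 1) // STEP_LOC
    return BASE_PRICE_USD + extra_blocks * STEP_PRICE_USD


if __name__ == "__main__":
    for loc in (100_000, 250_000, 1_900_000):
        print(f"{loc:>9,} LoC -> ${estimate_team_plan_monthly_cost(loc)}/month")
```

Under these assumptions, a codebase at the 1.9M LoC cap works out to 180 additional blocks on top of the base price.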

Reviews:

“The product provides false reports sometimes.”

“There are many options and examples available in the tool that help us fix the issues it shows us.”

5. CodeQL SAST Tool

Overview: CodeQL is a query-based static code analysis tool developed by GitHub that enables advanced, customizable vulnerability detection through its own query language. It allows security researchers and teams to write precise queries that inspect code behavior across supported languages, making it one of the most powerful tools for deep security auditing and finding complex vulnerability patterns that simpler SAST tools miss.

CodeQL does not offer AI AutoFix or remediation assistance, and all findings must be manually reviewed and addressed by developers. Its learning curve is steep: using it effectively requires specialized knowledge of the CodeQL language and security logic. It is best suited for audit-oriented workflows and security research rather than day-to-day developer-integrated scanning. For teams building on GitHub, it integrates natively through GitHub Advanced Security and GitHub Actions.

Key Features:

- Custom query-based vulnerability detection using the CodeQL query language

- Deep code behavior analysis across Java, JavaScript, Python, C/C++, C#, Go, Ruby, and Swift

- Native GitHub integration through GitHub Advanced Security

- Automated scanning on pull requests and scheduled runs via GitHub Actions

- SARIF output for integration with security dashboards and reporting tools

Cons:

- Steep learning curve requiring specialized CodeQL knowledge to write effective queries

- No AI AutoFix or remediation assistance; all fixes are manual

- No malware detection or supply chain threat visibility

- Requires GitHub Enterprise Cloud or Azure DevOps; cannot be purchased as a standalone tool

- Better suited for security audits than continuous developer-integrated security feedback

Pricing: Starts at $70/month per user, combining GitHub Advanced Security ($49/month per active committer) and GitHub Enterprise or Azure DevOps ($21/month). Cannot be purchased independently of the GitHub or Azure DevOps platform.

Reviews:

“GitHub Code Scanning should add more templates.”

“The solution helps identify vulnerabilities by understanding how ports communicate with applications running on a system.”

6. Mend

Overview: Mend SAST is part of Mend.io’s AI-native AppSec platform, offering a dual-phase scanning approach: a fast scan integrated into AI code generation engines for real-time feedback, and a deeper repository-level or CI pipeline scan for comprehensive coverage. It supports over 25 programming languages and correlates SAST findings with SCA, DAST, IaC, and AI component risk data in a unified dashboard, making it a strong option for mid-to-large organizations seeking a centralized AppSec platform.

Unlike some tools in this list, Mend SAST is positioned as a full platform rather than a standalone scanner, which means its value compounds when used alongside Mend’s SCA and supply chain capabilities. For teams evaluating it as a pure SAST tool, the pricing model and minimum commitment may be a barrier compared to more modular options.

Key Features:

- Dual-phase scanning: fast inline scans during AI code generation and deep scans at repository or CI level

- Support for 25+ programming languages with AI-assisted remediation

- Unified risk view correlating SAST, SCA, DAST, IaC, and AI security findings

- Policy enforcement with software supply chain risk integration

- Native CI/CD integration across major repositories and pipeline platforms

Cons:

- No malware detection; requires external tooling for supply chain threat protection

- No freemium tier; the platform is designed for mid-to-large organization budgets

- Annual-only billing with no monthly plan option

Pricing: Starts at $1,000/year per developer for full platform access, including SAST, SCA, IaC, Secrets, and AI component scanning. No contributor minimums or usage caps.

Key Metrics: How to Evaluate SAST Tools

With the tools compared, these are the criteria that matter most when making an informed selection decision:

True Positive Rate. A SAST tool that misses real vulnerabilities provides a false sense of security. The OWASP Benchmark Project provides standardized TPR measurements for common vulnerability types. Xygeni achieves 100%, Snyk Code 97.18%, Semgrep 87.06%, and SonarQube 50.36%. The gap between these figures is not marginal: a 50% TPR means half of real vulnerabilities go undetected.

False Positive Rate. Alert fatigue is one of the primary reasons security findings go unresolved. When developers receive too many false alarms, they begin ignoring or dismissing findings without investigation. A low FPR is not a nice-to-have: it is the difference between a tool that gets used and one that gets turned off. Xygeni’s 16.7% FPR compares favorably to Snyk’s 34.55% and Semgrep’s 42.09%.
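The two rates are easy to misread, so it helps to spell out how they are computed. The sketch below uses hypothetical counts, not any tool's real results. It shows that False Positive Rate is measured against safe test cases, which is not the same as the share of flagged findings that turn out to be false (one minus precision).

```python
from dataclasses import dataclass


@dataclass
class ConfusionCounts:
    """Outcome counts for one tool on a labeled test suite."""
    true_positives: int   # real vulnerabilities the tool flagged
    false_negatives: int  # real vulnerabilities the tool missed
    false_positives: int  # safe test cases the tool flagged anyway
    true_negatives: int   # safe test cases the tool left alone


def true_positive_rate(c: ConfusionCounts) -> float:
    """Share of real vulnerabilities that were detected."""
    return c.true_positives / (c.true_positives + c.false_negatives)


def false_positive_rate(c: ConfusionCounts) -> float:
    """Share of safe test cases that were incorrectly flagged."""
    return c.false_positives / (c.false_positives + c.true_negatives)


def precision(c: ConfusionCounts) -> float:
    """Share of flagged findings that are real vulnerabilities."""
    return c.true_positives / (c.true_positives + c.false_positives)


# Hypothetical suite with as many safe cases as vulnerable ones.
balanced = ConfusionCounts(true_positives=90, false_negatives=10,
                           false_positives=30, true_negatives=70)

# Same rates, but nine times as many safe cases (closer to real codebases).
mostly_safe = ConfusionCounts(true_positives=90, false_negatives=10,
                              false_positives=270, true_negatives=630)

if __name__ == "__main__":
    for name, counts in (("balanced", balanced), ("mostly safe", mostly_safe)):
        print(name,
              f"TPR={true_positive_rate(counts):.2f}",
              f"FPR={false_positive_rate(counts):.2f}",
              f"precision={precision(counts):.2f}")
```

Both suites have the same TPR and FPR, yet the share of flagged findings that are real differs sharply. The same FPR therefore produces more triage noise on a codebase where most code is safe.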

AI AutoFix quality. The presence of an AutoFix feature is less important than its safety and accuracy. A fix that introduces a new vulnerability or breaks the build is worse than no fix at all. Look for tools that evaluate Remediation Risk before suggesting changes, showing breaking-change impact alongside the fix itself.

Malware detection. Traditional SAST tools analyze code you write. They do not detect malicious code injected through compromised dependencies, backdoored build tools, or supply chain attacks. This is a category gap that only a handful of tools address. See how malicious code can cause damage for context on why this matters.

CI/CD integration depth. There is a difference between a tool that can be added to a pipeline and a tool with native, maintained integrations for your specific platform. Verify support for your exact CI/CD system before evaluating other features.

Coverage breadth. A SAST tool that requires four additional subscriptions to cover secrets, SCA, IaC, and containers will cost significantly more and introduce integration overhead. Platforms that consolidate coverage, like Xygeni, reduce both cost and operational complexity at scale. Compare options using the best application security tools overview for broader context.

AI AutoFix: What It Actually Means in 2026

Until recently, most SAST tools were detection-only platforms. They flagged vulnerabilities and left remediation entirely to developers. In 2026, AI-powered AutoFix has become a baseline expectation, but not all implementations are equal.

The meaningful distinction is between tools that suggest generic fixes based on pattern matching and tools that understand the full code context, validate the fix for safety, and evaluate whether the change could break existing behavior. Autofix in AppSec done well reduces mean time to remediation significantly. Done poorly, it creates new problems while appearing to solve old ones.

Xygeni’s AI AutoFix is validated through its MCP Server and Remediation Risk engine before any suggestion reaches the developer, ensuring that fixes are safe, contextually accurate, and production-ready. Snyk and Semgrep offer AutoFix capabilities that work well for common patterns but require more manual validation on complex or context-dependent issues. SonarQube’s AI CodeFix focuses primarily on maintainability rather than security remediation. CodeQL offers no AutoFix capability.

How to Choose the Right SAST Tool

If detection accuracy is the priority: Xygeni’s 100% TPR and 16.7% FPR, verified by the OWASP Benchmark, make it the strongest choice for teams where missing vulnerabilities or drowning in false positives carries real risk.

If developer adoption with minimal friction is the priority: Snyk Code offers the lowest-friction entry point for teams already in the Snyk ecosystem, with IDE integration that developers adopt quickly, at the cost of a higher false positive rate.

If customization and open source are the priority: Semgrep gives security teams full control over detection rules and runs fast without compilation. The trade-off is a higher false positive rate and the ongoing investment required to maintain effective custom rules.

If code quality is the primary goal with basic security visibility: SonarQube remains a well-established choice for enforcing code standards, with the understanding that its security detection rate is significantly lower than dedicated security-first tools.

If deep audit capability is needed: CodeQL is the most powerful tool for complex, custom vulnerability research, but requires specialized knowledge and is not suited for continuous developer-integrated workflows.

If a unified enterprise AppSec platform is the goal: Mend SAST offers the broadest platform integration for mid-to-large organizations, with a pricing model that reflects that positioning.

Final Thoughts

SAST tools vary more than their marketing suggests. The OWASP Benchmark data in this guide shows meaningful differences in detection accuracy and false positive rates that directly affect how useful a tool is in practice. A tool that detects 50% of vulnerabilities is not half as good as one that detects 100%: it means half of your real vulnerabilities remain invisible while your team spends time on alerts that may not be real.

For teams that need the highest accuracy available, AI-powered remediation that is safe to apply, and supply chain protection that goes beyond static code analysis, Xygeni SAST provides the most complete approach in 2026 as part of its unified AppSec platform.

Start your free 7-day trial of Xygeni, no credit card required.


FAQ

What is a SAST tool?

A SAST (Static Application Security Testing) tool analyzes source code, bytecode, or binary code for security vulnerabilities without executing the application. It identifies issues such as SQL injection, cross-site scripting, insecure configurations, and logic flaws early in the development process, before code reaches production.

What is the difference between SAST and DAST?

SAST analyzes code without running the application, catching vulnerabilities at the source code level during development. DAST (Dynamic Application Security Testing) analyzes a running application from the outside, simulating real attacks to find exploitable vulnerabilities that only appear at runtime. Both are necessary for complete application security coverage. See static analysis vs dynamic analysis for a detailed comparison.

What is the OWASP Benchmark and why does it matter for SAST tools?

The OWASP Benchmark Project is a standardized test suite that measures how accurately security tools detect real vulnerabilities versus false positives. It provides a True Positive Rate (how many real vulnerabilities are found) and a False Positive Rate (how many non-issues are incorrectly flagged) for each tool. It is one of the few objective, vendor-neutral ways to compare SAST tool accuracy across common vulnerability categories like SQL injection and XSS.
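The two rates can be combined into a single number. To our understanding, the Benchmark's scorecards report a score equal to True Positive Rate minus False Positive Rate. The sketch below applies that formula to the figures quoted in this guide. The function and variable names are hypothetical, and SonarQube is left out because no False Positive Rate is published for it here.

```python
# (TPR %, FPR %) as quoted in this guide for tools with both figures published.
published_rates = {
    "Xygeni": (100.0, 16.7),
    "Snyk Code": (97.18, 34.55),
    "Semgrep": (87.06, 42.09),
}


def benchmark_score(tpr_percent: float, fpr_percent: float) -> float:
    """Score as TPR minus FPR, in percentage points.

    Random guessing scores about 0; a perfect tool scores 100.
    """
    return round(tpr_percent - fpr_percent, 2)


def rank_tools(rates: dict) -> list:
    """Return (tool, score) pairs sorted from highest to lowest score."""
    scored = [(tool, benchmark_score(tpr, fpr)) for tool, (tpr, fpr) in rates.items()]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)


if __name__ == "__main__":
    for tool, score in rank_tools(published_rates):
        print(f"{tool}: {score}")
```

A tool that flags everything would reach a 100% True Positive Rate but also a 100% False Positive Rate, for a score of 0, which is why neither rate is meaningful on its own.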

What is AI AutoFix in SAST tools?

AI AutoFix is a capability that generates secure code fixes for detected vulnerabilities, either suggesting them to developers or applying them automatically in pull requests. The quality of AutoFix implementations varies significantly: the best tools validate fixes for safety, evaluate breaking-change risk, and tailor suggestions to the specific code context. Less mature implementations offer generic pattern-based fixes that often require manual adjustment.

Which SAST tool has the highest detection accuracy?

Based on OWASP Benchmark data, Xygeni SAST achieves a 100% True Positive Rate with a 16.7% False Positive Rate, the strongest accuracy profile of the tools with published benchmark data. Snyk Code achieves 97.18% TPR with a 34.55% FPR, and Semgrep achieves 87.06% TPR with a 42.09% FPR. SonarQube achieves 50.36% TPR.