AI for coding has introduced a new wave of creativity, speed, and experimentation. One of the most talked-about trends is vibe coding, where developers rely on AI assistants like ChatGPT or Cursor to generate code based on intent rather than strict structure. While it can dramatically boost productivity, this style also brings serious concerns. In particular, vibe coding security risks and the broader spectrum of AI-generated code security risks are raising new challenges for DevOps and security teams alike.

This guide answers the 10 most important questions developers are asking about vibe coding. More importantly, it shows how to stay safe while taking full advantage of AI for coding.

1. What is vibe coding?

Vibe coding is a style of development in which developers describe the functionality they want in natural language and let AI tools generate the code, working by “vibe” or intuition instead of writing everything manually. It cuts down on boilerplate, speeds up prototyping, and encourages experimentation.

However, this fluidity can introduce security risks that developers often overlook. Because AI coding assistants don’t know your threat model or security context, their mistakes can go unnoticed.

2. Is vibe coding safe?

Not always. While the code may run correctly, it may lack critical protections. For example, you might end up with hardcoded secrets, missing input validation, or outdated dependencies. These are just a few examples of common AI-generated code security risks.

In a typical vibe coding session, these issues slip through because the AI is focused on functionality, not security. That’s why security-conscious teams treat vibe coding as a starting point, not the final implementation.
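To make the “missing input validation” case concrete, here is a minimal sketch of strict validation at a trust boundary. The `SignupRequest` type, its fields, and the limits are hypothetical and chosen only for illustration; a real project would more likely rely on a schema library.

```python
import re
from dataclasses import dataclass

# Hypothetical rules for an illustrative signup endpoint.
USERNAME_RE = re.compile(r"[a-z0-9_]{3,32}")
ALLOWED_FIELDS = {"username", "age"}


@dataclass(frozen=True)
class SignupRequest:
    username: str
    age: int


def parse_signup(payload: dict) -> SignupRequest:
    """Reject anything that is not exactly the expected shape."""
    # Unknown keys are refused rather than silently ignored.
    unknown = set(payload) - ALLOWED_FIELDS
    if unknown:
        raise ValueError(f"unexpected fields: {sorted(unknown)}")

    username = payload.get("username")
    age = payload.get("age")

    if not isinstance(username, str) or not USERNAME_RE.fullmatch(username):
        raise ValueError("username must be 3-32 characters of a-z, 0-9, or _")

    # bool is a subclass of int in Python, so it is excluded explicitly.
    if isinstance(age, bool) or not isinstance(age, int) or not 13 <= age <= 120:
        raise ValueError("age must be an integer between 13 and 120")

    return SignupRequest(username=username, age=age)
```

AI-generated handlers often pass the raw payload straight through. A function like this gives reviewers one place to check what the code accepts.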

3. What are the top vibe coding security risks?

Some of the most frequent vibe coding security risks include:

- Injection flaws due to unvalidated inputs

- Use of insecure or deprecated libraries

- Lack of HTTPS enforcement

- Poor authentication and authorization logic

- Secrets committed to repositories

These issues don’t always come from negligence. Often they come from AI suggestions that favor code that works quickly over code that is hardened. To address them, teams should apply secure coding standards and enforce automated checks.
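The first item in the list above, injection through unvalidated input, can be illustrated with Python’s standard `sqlite3` module. This is a sketch only: the `users` table and the function names are made up for the example.

```python
import sqlite3


def make_demo_db() -> sqlite3.Connection:
    """Create an in-memory database with a hypothetical users table."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, name TEXT)")
    conn.executemany("INSERT INTO users (name) VALUES (?)", [("alice",), ("bob",)])
    return conn


def find_user_unsafe(conn: sqlite3.Connection, username: str) -> list:
    # Pattern to avoid: user input is concatenated into the SQL text,
    # so quotes in the input can change the meaning of the query.
    query = f"SELECT id, name FROM users WHERE name = '{username}'"
    return conn.execute(query).fetchall()


def find_user_safe(conn: sqlite3.Connection, username: str) -> list:
    # Parameter binding: the driver passes the value as data, not as SQL.
    return conn.execute(
        "SELECT id, name FROM users WHERE name = ?", (username,)
    ).fetchall()
```

With the input `x' OR '1'='1`, the unsafe version returns every row in the table, while the bound-parameter version returns no rows because no user has that literal name.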

4. What are the dangers of AI-generated code?

The core issue with AI-generated code security risks is that AI lacks real context. It doesn’t know if it’s working on a financial app, an internal tool, or a production-grade API. As a result, it might:

- Omit error handling

- Bypass secure defaults

- Suggest overly permissive roles or scopes

- Recommend insecure configurations

Even experienced developers can overlook these red flags when they rely heavily on AI for coding without reviewing outputs thoroughly.
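As one illustration of “overly permissive roles or scopes,” the sketch below flags wildcard grants in a policy document. It assumes a JSON shape modeled loosely on AWS IAM policies (`Statement`, `Effect`, `Action`, `Resource`). The function name is hypothetical and the check is deliberately coarse.

```python
def find_wildcard_statements(policy: dict) -> list:
    """Return Allow statements that grant wildcard actions or resources."""
    statements = policy.get("Statement", [])
    # A single statement may appear as an object instead of a list.
    if isinstance(statements, dict):
        statements = [statements]

    flagged = []
    for stmt in statements:
        if stmt.get("Effect") != "Allow":
            continue
        actions = stmt.get("Action", [])
        resources = stmt.get("Resource", [])
        # Normalize single strings to one-element lists.
        actions = [actions] if isinstance(actions, str) else actions
        resources = [resources] if isinstance(resources, str) else resources

        too_broad_action = any(a == "*" or a.endswith(":*") for a in actions)
        too_broad_resource = "*" in resources  # only the bare "*" resource
        if too_broad_action or too_broad_resource:
            flagged.append(stmt)
    return flagged
```

A check like this will not catch every risky grant, but it surfaces the `"Action": "*"` shortcuts that generated configurations tend to include when the prompt only asked for something that works.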

Additionally, as emphasized by the OWASP Top 10 for LLM Applications and detailed in the OWASP GenAI Security Solutions Reference Guide (Q2–Q3 2025), generative AI introduces new categories of risk, such as prompt injection, insecure output handling, over-trusted responses, and potential data leakage. These aren’t just theoretical. They’re real vulnerabilities that can be silently introduced into production environments if not caught early. For further insights, check out MCP Security: Protecting the Model Context Protocol and the official OWASP GenAI Security Solutions Reference Guide.

5. Can AI code compromise compliance?

Absolutely. When using AI for coding, especially in high-speed environments like vibe coding, it’s easy to overlook compliance pitfalls. AI tools may recommend third-party libraries with unclear or incompatible licenses. They can also generate data-handling patterns that inadvertently violate GDPR, HIPAA, or SOC 2 requirements.

Because vibe-coded projects tend to skip manual reviews, these issues can slip through unnoticed. And once embedded in production, they can lead to regulatory penalties or a loss of customer trust.

That’s why real-time monitoring and policy enforcement are critical. Solutions like Xygeni’s CI/CD security guardrails provide visibility across your pipelines and dependencies. They automatically detect violations, enforce governance, and help keep your codebase compliant, even when AI is writing most of it.
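The license concern raised in this section can be sketched as a simple allowlist check. This is not how any particular product implements it. The allowlist, the function name, and the input format (a mapping of package name to declared license identifier) are assumptions made for illustration.

```python
from typing import Dict, Optional

# Hypothetical policy: licenses this example organization has approved.
ALLOWED_LICENSES = frozenset({"MIT", "BSD-3-Clause", "Apache-2.0"})


def license_violations(
    dependencies: Dict[str, Optional[str]],
    allowed: frozenset = ALLOWED_LICENSES,
) -> Dict[str, str]:
    """Map each non-compliant package to the reason it was flagged."""
    violations = {}
    for package, license_id in sorted(dependencies.items()):
        if not license_id:
            # Missing metadata is treated as a violation, not a pass.
            violations[package] = "license not declared"
        elif license_id not in allowed:
            violations[package] = f"license {license_id} is not on the allowlist"
    return violations
```

Run as a CI step, a non-empty result would fail the build, so a dependency suggested by an AI assistant gets a license decision before it is merged.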

6. How can developers secure AI-generated code?

To reduce AI-generated code security risks, start by assuming that AI code is untrusted by default. Then:

- Use secret scanners and dependency checkers

- Validate inputs with strict schema enforcement

- Enable linters for security best practices

- Scan IaC files and Docker configs automatically

- Review AI output in code reviews

These practices make vibe coding safer while preserving its speed and flexibility.
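To show what the first practice looks like in principle, here is a deliberately small secret-scanning sketch. The two patterns are illustrative assumptions, not a complete rule set. Dedicated scanners cover far more formats and verify what they find.

```python
import re

# Illustrative patterns only; real scanners ship many more rules.
PATTERNS = {
    # Access key IDs in the well-known "AKIA" + 16 characters format.
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    # A credential-like name assigned a quoted literal of 8+ characters.
    "hardcoded_credential": re.compile(
        r"(?i)(api[_-]?key|secret|token|password)\s*[:=]\s*['\"][^'\"]{8,}['\"]"
    ),
}


def scan_text(text: str) -> list:
    """Return (line_number, rule_name) for each suspicious line."""
    findings = []
    for lineno, line in enumerate(text.splitlines(), start=1):
        for name, pattern in PATTERNS.items():
            if pattern.search(line):
                findings.append((lineno, name))
    return findings
```

A check like this could run as a pre-commit hook over staged files. A line that reads a value from the environment is not flagged, while a quoted literal assigned to a `password` or `api_key` variable is.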

7. Are there tools that detect vibe coding risks?

Yes, modern AppSec platforms like Xygeni are designed to help. Xygeni integrates into your CI/CD and DevOps workflows to detect:

- Exposed secrets in AI-suggested commits

- Misconfigured infrastructure code

- Risky or unverified dependencies

- CI/CD pipeline flaws introduced by automation

With support for real-time scanning and policy enforcement, Xygeni protects against the top vibe coding security risks without slowing developers down.

8. Is AI for coding replacing secure development?

Not at all. AI for coding is a powerful assistant, but security still requires developer judgment. While AI can generate syntactically correct code, it doesn’t model threats or understand your organization’s risk tolerance.

In fact, the rise of vibe coding makes secure development practices more important than ever. Each AI-generated suggestion should be treated as untrusted until reviewed, just like third-party code.

To maintain a strong security posture while embracing AI, organizations must evolve their development practices. That means blending human oversight with automated guardrails. For a deeper dive into how cybersecurity needs to evolve with AI, check out this full AI cybersecurity guide.

9. Can vibe coding work in production?

It can, but only with the right guardrails. Vibe coding works best when paired with automated controls that detect issues before they reach production. This includes:

- Static analysis for known vulnerabilities

- Dependency scanning with reachability analysis

- Prioritization of findings by EPSS (Exploit Prediction Scoring System) score

- Secure defaults in your frameworks and templates

By combining these practices with regular reviews, vibe coding security risks can be reduced significantly, even in production workflows.
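Two of the controls above, reachability analysis and EPSS-based prioritization, can be combined into a simple triage rule. The sketch below assumes each finding already carries an EPSS probability between 0 and 1 and a reachability flag from some upstream scanner. The `Finding` type, the 0.5 threshold, and the placeholder CVE IDs in the usage note are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Finding:
    cve_id: str
    epss: float       # estimated probability of exploitation, 0.0 to 1.0
    reachable: bool   # whether the vulnerable code is actually called


def prioritize(findings, block_threshold: float = 0.5):
    """Order findings for triage and pick the ones that should block a release."""
    # Reachable findings come first; within each group, highest EPSS first.
    ordered = sorted(findings, key=lambda f: (not f.reachable, -f.epss))
    # Only reachable findings at or above the threshold block the pipeline.
    blocking = [f for f in ordered if f.reachable and f.epss >= block_threshold]
    return ordered, blocking
```

For example, given a reachable finding `CVE-0000-0001` with EPSS 0.72, an unreachable `CVE-0000-0002` with EPSS 0.91, and a reachable `CVE-0000-0003` with EPSS 0.04, only the first would block the release. The other two are still reported, just lower in the queue.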

10. How does Xygeni make AI for coding safer?

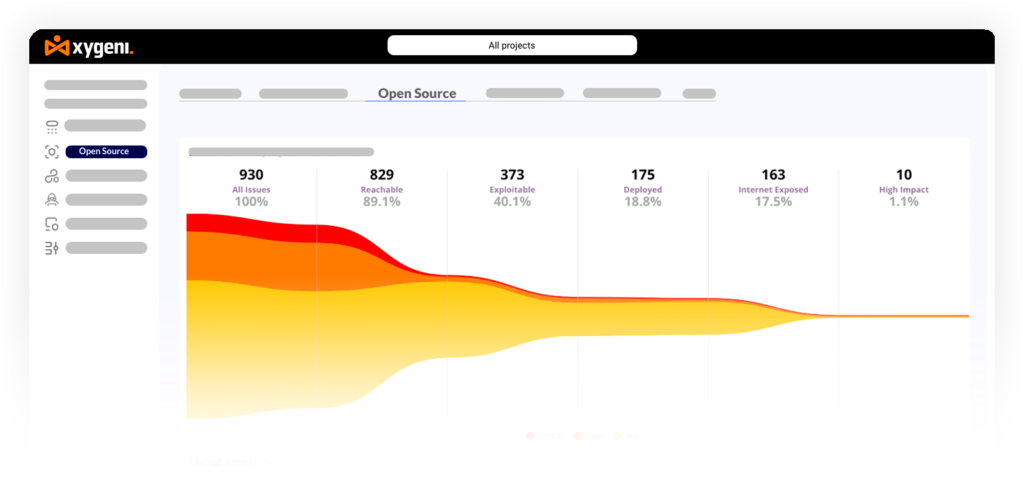

Xygeni plays a dual role in your development lifecycle. First, it identifies and prevents the most common AI-generated code security risks. Then, it uses AI itself to help you fix those problems faster and more efficiently.

At its core, Xygeni continuously scans all AI-generated code, infrastructure as code, dependencies, and pipeline configurations. It flags hardcoded secrets, insecure settings, outdated packages, and permission issues before they ship to production. But what makes it even more powerful is its automation layer.

With the Xygeni Bot, teams can automate remediation for SAST, SCA, and secrets issues. The bot runs:

- On every pull request to keep code clean at merge time

- On-demand for manual control

- On daily schedules to reduce backlog

When it detects a fixable issue, it automatically creates a pull request with the suggested changes. Developers simply review and approve, staying focused on delivering features instead of fixing security gaps manually.

For organizations with strict privacy and governance needs, Xygeni also supports AI Auto-Fix with customer-provided models. This means you can use models from OpenAI, Claude, or Gemini, or models reached through OpenRouter, under your own accounts, so your code goes only to a provider you have chosen. This keeps your intellectual property under your control while you benefit from the speed of AI-assisted remediation.

In short, Xygeni doesn’t just secure AI for coding; it supercharges it. You get the productivity of vibe coding without the risk, and the power of AI without compromising control.

Conclusion: Don’t Just Code Fast, Code Smart

Vibe coding and AI for coding are here to stay, and they’re transforming the way developers build, test, and deploy software. But as coding speeds up, security stakes grow higher. Relying blindly on AI-generated code introduces silent vulnerabilities and compliance risks that may only surface in production.

To truly unlock the benefits of AI-driven development, security must evolve alongside it. Xygeni empowers teams to enjoy the creative freedom of vibe coding while embedding safety at every stage, from code to cloud. It detects hidden threats, automates remediation, and integrates AI responsibly so your workflows remain fast and secure.

With Xygeni, AI becomes a trusted partner, not just for writing code, but for keeping it secure.

About the Author

Written by Fátima Said, Content Marketing Manager specialized in Application Security at Xygeni Security.

Fátima creates developer-friendly, research-based content on AppSec, ASPM, and DevSecOps. She translates complex technical concepts into clear, actionable insights that connect cybersecurity innovation with business impact.