Shadow AI is no longer just employees using an unapproved chatbot. Today, shadow AI often includes unapproved AI agents running with real permissions: repo access, CI/CD tokens, file read/write, and messaging APIs. In other words, shadow AI can behave like shadow automation, and that’s why it increases security risk faster than most teams expect.

Here’s the security gap: shadow AI expands your attack surface without changing your controls. For instance, one agent can ingest untrusted content, follow hidden instructions, and then call tools that touch production systems. Consequently, the risk is not only data leakage; it’s also unauthorized actions executed at machine speed.

If you want a practical definition you can quote internally: Shadow AI is any AI capability used without governance that can access sensitive data or trigger real actions. Accordingly, the right response is not “ban AI.” Instead, you need visibility, least privilege, skill governance, and tool-call auditing to control shadow AI without slowing delivery.

What is Shadow AI?

Shadow AI is the use of AI tools, models, or agent workflows without formal approval, monitoring, or governance by IT or security. That includes unsanctioned chatbots, browser extensions, IDE copilots, and local or hosted agents connected to enterprise tools. Most importantly, shadow AI creates blind spots in data handling, access control, and auditability. Therefore, it can turn routine developer activity into a security and compliance risk.

Shadow AI vs Shadow IT vs Agentic Shadow AI

Shadow AI overlaps with shadow IT, but it behaves differently. Above all, AI systems can learn from inputs and scale decisions, while agents can also execute actions through tools and tokens. As a result, teams need a clearer model of what they are defending.

| Dimension | Shadow IT | Shadow AI | Agentic Shadow AI |
|---|---|---|---|
| What it is | Unapproved software or services | Unapproved AI tools used for work | Unapproved AI agents that can call tools and execute actions |
| Typical example | Unsanctioned SaaS, plugins, scripts | Personal chatbot or AI editor used with company data | Agent connected to repos, CI/CD, email, tickets, cloud APIs |
| Main risk | Data exposure, compliance gaps, unmanaged access | Data leakage, policy bypass, untracked model usage | Unauthorized actions, privilege misuse, tool-driven exfiltration |
| Risk speed | Moderate | Fast | Very fast (automation + credentials) |
| Attack paths | Credential misuse, insecure configs, OAuth abuse | Prompt injection, sensitive prompt logging, data retention issues | Tool injection, skill supply chain, browser-to-local takeover, token pivoting |
| Visibility challenge | Shadow apps and unknown vendors | Unknown AI usage + unclear data flows | Unknown AI usage + hidden tool calls + unclear attribution |
| Best first control | SaaS discovery + access governance | Approved AI catalog + redaction rules + logging | Agent inventory + least privilege + tool-call logging |
| What “good” looks like | Approved catalog, SSO, logging, vendor review | Approved AI catalog, retention controls, safe data handling | Approved agent runtime, allowlisted skills, scoped tokens, audited actions |

Why OpenClaw Agent Risks Matter to DevSecOps

OpenClaw agent risks matter because agents change the security model from “data in, text out” to data in, actions out. In a shadow AI scenario, that means a single developer can run an ungoverned agent that connects to repos, CI/CD, cloud APIs, and messaging tools. As a result, shadow AI turns into shadow automation with credentials.

That shift breaks common assumptions. For example, teams often treat “local agents” as low risk because they run on a laptop or bind to localhost. However, recent OpenClaw incidents show that the browser can become the bridge, tokens can be exposed, and tool gateways can be taken over, even in “local-only” setups.

In short, once an agent can call tools, your threat model must include token theft, tool invocation abuse, skills supply chain compromise, and indirect injection. Otherwise, you will miss the riskiest part of shadow AI.
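To make "least privilege plus tool-call auditing" concrete, here is a minimal sketch of a wrapper that every tool invocation passes through. The agent names, tool names, `ALLOWED_TOOLS` map, and in-memory `AUDIT_LOG` are all hypothetical placeholders, not part of OpenClaw or any specific agent runtime.

```python
import json
import time
from typing import Any, Callable, Dict, List, Set

# Hypothetical scope map: which tools each agent identity may call.
ALLOWED_TOOLS: Dict[str, Set[str]] = {
    "docs-agent": {"read_file"},
    "release-agent": {"read_file", "open_pull_request"},
}

AUDIT_LOG: List[str] = []  # stand-in for an append-only log sink


def call_tool(agent_id: str, tool_name: str, tool: Callable[..., Any], **kwargs: Any) -> Any:
    """Run a tool only if the agent is scoped for it; record the attempt either way."""
    allowed = tool_name in ALLOWED_TOOLS.get(agent_id, set())
    AUDIT_LOG.append(json.dumps({
        "ts": time.time(),
        "agent": agent_id,
        "tool": tool_name,
        "args": sorted(kwargs),  # argument names only; values may contain secrets
        "allowed": allowed,
    }))
    if not allowed:
        raise PermissionError(f"{agent_id} is not scoped for {tool_name}")
    return tool(**kwargs)
```

The point of the design is that denied attempts are logged too, so an injected instruction that tries an out-of-scope tool leaves a trace with clear attribution.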

The most severe OpenClaw incidents (confirmed)

1) CVE-2026-25253 — 1-Click takeover / RCE path via malicious link

Impact: Maximum (high likelihood + high impact)

What it enabled (high level):

- OpenClaw could obtain a `gatewayUrl` from a query string and automatically open a WebSocket connection without prompting, sending a token value in the process.

- That token exposure can enable gateway takeover and downstream abuse depending on permissions and configuration.

Why it’s so severe:

It turns “click a link” into “agent toolchain compromise,” which is exactly how shadow AI becomes shadow automation with credentials.
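The general defense against this class of bug is to treat a URL taken from a link as untrusted input. The sketch below is not OpenClaw's actual fix; it assumes a hypothetical `TRUSTED_GATEWAYS` allowlist and an example hostname, and only shows the validation step that should happen before any connection or token is sent.

```python
from urllib.parse import parse_qs, urlparse

# Hypothetical allowlist of (scheme, host, port) gateways this client may use.
TRUSTED_GATEWAYS = {("wss", "gateway.internal.example", 443)}


def gateway_from_link(link: str):
    """Return the gatewayUrl from a link only if it is explicitly trusted, else None."""
    values = parse_qs(urlparse(link).query).get("gatewayUrl", [])
    if len(values) != 1:
        return None  # missing or ambiguous parameter: do not connect
    target = urlparse(values[0])
    try:
        port = target.port or (443 if target.scheme == "wss" else 80)
    except ValueError:
        return None  # malformed port
    if (target.scheme, target.hostname, port) not in TRUSTED_GATEWAYS:
        return None
    return values[0]
```

Even when this returns a URL, a cautious client would still ask the user to confirm before connecting rather than attaching a token automatically.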

2) ClawJacked — drive-by website → localhost WebSocket brute force → full agent hijack

Impact: Very high (silent + scalable pattern)

What it enabled (high level):

- A malicious website could open a WebSocket connection to localhost and target OpenClaw’s local service.

- With weak password-based auth, attackers could brute force the password and gain trusted access, enabling full control of the agent instance.

Why it’s so severe:

It breaks the “localhost is safe” assumption. In practice, the browser becomes the bridge, so “local-only” is not a real boundary.

3) Skills ecosystem abuse: ToxicSkills + malicious ClawHub skills (agent skills supply chain)

Impact: High to maximum (scale + persistence)

What it enabled (high level):

- Malicious or vulnerable skills can behave like dependencies: installed from a marketplace, updated independently, and often operating with agent-level permissions.

- Independent research analyzing 3,984 agent skills found 13.4% (534) had at least one critical issue, including malware distribution, prompt injection, and exposed secrets.

- Real-world examples show attackers shipping crypto-themed “skills” to push malware or steal sensitive data via social engineering and obfuscated commands.

Why it’s so severe:

This is supply chain risk, but for agents: a “skill” can inherit the agent’s ability to read files, access secrets, or execute tool actions.

| Incident | Attack type | User interaction | Primary consequence | Sources |
|---|---|---|---|---|
| CVE-2026-25253 | Malicious link → query-string gatewayUrl → token exposure → gateway takeover / RCE path | 1-click (UI:R) | Gateway compromise; potential downstream execution depending on permissions | NVD (NIST), INCIBE-CERT, The Hacker News |
| ClawJacked | Drive-by site → localhost WebSocket → brute force → agent hijack | Visit a site | Full local agent takeover; log/config/data access | Oasis Security, TechRadar, The Hacker News |
| ToxicSkills / malicious ClawHub skills | Skills marketplace as supply chain (malware, injection, secrets exposure) | Variable (install/use skill) | Agent-level compromise via inherited permissions and malicious skill behavior | Tom’s Hardware, The Hacker News |

Use case: reducing OpenClaw-style Shadow AI risk with a DevSecOps workflow

OpenClaw is a useful case study because it shows how shadow AI becomes real operational risk: an agent runs “locally,” connects to repos and pipelines, and suddenly a browser visit, a token, or a third-party skill can turn into a takeover. The goal is not to ban agents. Instead, it’s to make sure agent-driven work flows through the same controls you already trust for code and supply chain.

Step 1: Treat agent “skills” like dependencies, not like harmless add-ons

Most shadow AI incidents don’t start with a sophisticated exploit. They start with adoption: a developer installs an agent, adds a couple of skills, and gives it access “so it works.” From that moment, the agent ecosystem behaves like a package ecosystem: skills update, helper scripts appear, and untrusted code can enter quietly.

So the first move is to shift mindset: anything the agent can install or execute is part of your supply chain. In a Xygeni workflow, that means you don’t wait for a breach report. You focus on earlier signals that a component is risky or outright malicious, so adoption stops before it spreads across repos and developer machines.

What changes in practice

- Teams stop copy-pasting “working agent configs” without review

- New skills and helper packages get treated like dependency intake, not personal tooling
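One way to picture "dependency intake" for skills is a pinned allowlist: a skill installs only if its name was reviewed and its content still matches the reviewed hash. This is a minimal sketch; the skill name and the `APPROVED_SKILLS` registry are hypothetical, and a real setup would load pins from a reviewed file rather than hard-code them.

```python
import hashlib
import hmac

# Hypothetical allowlist: skill name -> SHA-256 of the archive that was reviewed.
APPROVED_SKILLS = {
    "changelog-writer": hashlib.sha256(b"reviewed skill bundle v1").hexdigest(),
}


def is_skill_approved(name: str, archive_bytes: bytes) -> bool:
    """A skill is installable only if its name is approved and its bytes match the pin."""
    pinned = APPROVED_SKILLS.get(name)
    if pinned is None:
        return False  # never reviewed
    actual = hashlib.sha256(archive_bytes).hexdigest()
    return hmac.compare_digest(pinned, actual)  # content changed since review -> False
```

Pinning by hash means a silent marketplace update fails the check and has to go back through review, which is the same property lockfiles give you for packages.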

Step 2: Make PRs the control point, even when an agent wrote the change

Agents accelerate change. That’s the point. However, the OpenClaw story shows how quickly “small changes” become security events once tokens and tool gateways are involved. Therefore, relying on “developer caution” isn’t enough.

Instead, route agent output through pull requests and enforce scanning at PR time. That way, even if an agent proposes a dependency bump, a build script tweak, or a CI workflow edit, the PR becomes the choke point where policy is applied. Xygeni fits naturally here because it’s built for CI/CD and PR workflows, so risky changes are caught before they merge.

Typical agent-driven changes you want gated

- Dependency upgrades and lockfile churn

- Build scripts and install hooks

- CI workflow edits (permissions, secrets usage, network calls)

- New automation steps that run with elevated rights
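As an illustration of what a PR-time gate could check, the sketch below sorts a PR's changed files into the sensitive categories listed above. The path patterns are assumptions about a typical repository layout, not a rule set from Xygeni or any other scanner.

```python
from fnmatch import fnmatch

# Hypothetical path patterns; adjust to your repository layout.
SENSITIVE_PATTERNS = {
    "dependency": ["package-lock.json", "requirements*.txt", "poetry.lock", "go.sum"],
    "build-script": ["Makefile", "setup.py", "scripts/*"],
    "ci-workflow": [".github/workflows/*", ".gitlab-ci.yml", "Jenkinsfile"],
}


def classify_changes(changed_paths):
    """Map each sensitive category to the changed files that match it."""
    hits = {}
    for path in changed_paths:
        name = path.rsplit("/", 1)[-1]  # also match on the bare file name
        for category, patterns in SENSITIVE_PATTERNS.items():
            if any(fnmatch(path, p) or fnmatch(name, p) for p in patterns):
                hits.setdefault(category, []).append(path)
    return hits
```

A CI job could feed this the output of `git diff --name-only` and require an extra reviewer or a scan whenever the result is non-empty, regardless of whether a human or an agent authored the change.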

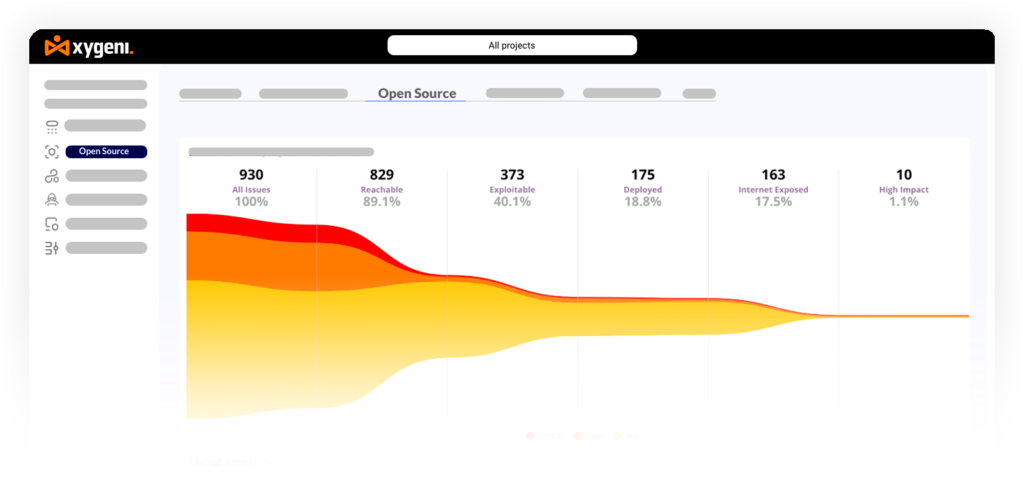

Step 3: Prioritize what attackers will use, not just what scanners find

Shadow AI increases volume. More automation means more dependency drift, more configuration churn, and more “small changes” per week. Consequently, teams can drown in findings unless prioritization maps to real exploitability.

This is where exploit context matters. If one issue is likely to be exploited and another is not, your workflow should reflect that difference. Xygeni’s prioritization approach is designed for this reality: reduce noise by focusing remediation on what is most likely to matter in practice.

A simple rule that scales

- Block or fast-track fixes for the issues with the highest real-world risk

- Defer low-signal noise so engineers keep shipping safely
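The rule above can be sketched as a small triage function. This is not Xygeni's prioritization logic; the `Finding` fields, the scoring formula, and the 0.5 threshold are illustrative assumptions, with `exploit_likelihood` standing in for an EPSS-style probability.

```python
from dataclasses import dataclass


@dataclass
class Finding:
    id: str
    severity: float            # 0-10, e.g. a CVSS base score
    exploit_likelihood: float  # 0-1, assumed input such as an EPSS-style probability
    reachable: bool            # whether the vulnerable code is actually used


def triage(findings, block_threshold=0.5):
    """Split findings into (block, defer); the block list is ordered by risk."""
    def risk(f):
        # Unreachable findings score zero no matter how severe they look.
        return f.exploit_likelihood * (f.severity / 10) if f.reachable else 0.0

    block = sorted((f for f in findings if risk(f) >= block_threshold), key=risk, reverse=True)
    defer = [f for f in findings if risk(f) < block_threshold]
    return block, defer
```

With this shape, a critical-severity finding that is unlikely to be exploited lands in the deferred list, while a slightly less severe but actively exploitable one blocks the merge.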

Step 4: Stop assuming “localhost is safe”

ClawJacked works as a lesson because it attacks an assumption many teams still carry: “if it’s local, it’s fine.” In reality, local gateways and local UIs still need production-grade thinking. The browser is part of the threat surface, and “local-only” is not a boundary you can depend on.

So you harden local services like you would any sensitive interface:

- Strong authentication (not just a human-chosen password)

- Rate limits and lockouts

- No auto-connect behavior that trusts unvalidated inputs

- Restrict who can connect and from where
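A minimal sketch of those four points for a local gateway might look like the following. The class, the allowed origin, and the limits are hypothetical; the check is meant to run during the WebSocket handshake, where browsers send an `Origin` header that a drive-by page cannot forge.

```python
import hmac
import time

ALLOWED_ORIGINS = {"http://127.0.0.1:8080"}  # hypothetical local UI origin
MAX_FAILURES = 5
LOCKOUT_SECONDS = 300


class LocalGatewayAuth:
    def __init__(self, token: str, clock=time.monotonic):
        self._token = token          # should be a long random value, not a chosen password
        self._clock = clock
        self._failures = 0
        self._locked_until = 0.0

    def authorize(self, origin, presented_token: str) -> bool:
        """Accept a connection only from an allowed origin with the right token."""
        if origin not in ALLOWED_ORIGINS:
            return False  # restrict who can connect and from where
        if self._clock() < self._locked_until:
            return False  # locked out after repeated failures
        if hmac.compare_digest(self._token.encode(), presented_token.encode()):
            self._failures = 0
            return True
        self._failures += 1
        if self._failures >= MAX_FAILURES:
            self._locked_until = self._clock() + LOCKOUT_SECONDS
            self._failures = 0
        return False
```

A token from `secrets.token_urlsafe(32)` combined with the lockout makes brute forcing impractical, and the origin check stops arbitrary websites from even reaching the token comparison.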

While Xygeni is not a localhost firewall, it helps reduce the practical impact of “local bypass” patterns by moving enforcement to the pipeline and platform. When controls live in CI/CD and security posture policies, shadow AI is less likely to bypass them “because it was local.”

Step 5: Watch for abnormal behavior that looks like supply chain abuse

OpenClaw-style incidents often share a common failure mode: something changes quietly, then workflows start behaving differently. That’s why anomaly-focused signals matter. If an environment suddenly starts pulling unusual dependencies, publishing versions rapidly, or showing patterns consistent with supply chain abuse, you want that flagged early.

Xygeni’s anomaly detection and early warning capabilities align with that goal: surface suspicious patterns early, before they become repeated incidents across teams.

Signals worth alerting on

- Sudden spikes in dependency changes across repos

- New packages/skills with low reputation or odd update patterns

- Unexpected CI steps that download runtimes or execute scripts

- Unusual network calls from build contexts
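The first signal, a sudden spike in dependency changes, can be approximated with a simple baseline comparison. This sketch is a generic statistical check, not how any particular product detects anomalies; the seven-day minimum, the three-sigma cutoff, and the `min_count` floor are assumed defaults.

```python
from statistics import mean, pstdev


def is_spike(history, today, min_sigma=3.0, min_count=5):
    """Flag today's change count if it sits far above the recent baseline.

    history: daily counts of dependency changes over the preceding days.
    """
    if len(history) < 7 or today < min_count:
        return False  # too little data, or too few changes to matter
    baseline = mean(history)
    spread = pstdev(history) or 1.0  # avoid dividing by zero on a flat history
    return (today - baseline) / spread >= min_sigma
```

Run per repository or per team, a check like this turns "something changed quietly" into an alert that someone can review the same day.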

The takeaway

This workflow is intentionally not “agent-specific.” It’s a DevSecOps pattern that works for shadow AI at scale: treat skills like dependencies, gate changes at PR/CI time, prioritize what’s exploitable, stop trusting localhost by default, and detect abnormal supply chain behavior early. That is how you reduce shadow AI risk without slowing delivery.

Shadow AI Security: What This Means for DevSecOps Teams

Shadow AI is no longer a side issue. In 2026, it increasingly means agents with real permissions, which turns simple mistakes into tool-driven incidents. OpenClaw is the clearest reminder: the risk isn’t only what the model “says,” it’s what the agent can do with tokens, gateways, and skills.

Accordingly, the most effective response is practical, not theoretical. Treat agent skills like dependencies, route agent output through PR and CI/CD guardrails, and stop assuming “localhost is safe.” At the same time, prioritize what is actually exploitable so teams can keep shipping without drowning in noise.

Ultimately, you don’t need to ban agents to control shadow AI security. You need to make sure agent-driven workflows can’t bypass the same supply chain and delivery controls that already protect your software lifecycle.