Agentic AI is reshaping the way software is built, tested, and secured. Unlike traditional models that respond to a single prompt, agentic AI systems act with autonomy. They observe, plan, act, and adjust without waiting for direct instructions. As a result, they can write code, review pull requests, fix errors, and even handle tasks normally assigned to developers. This shift is driving new interest in AI coding agents and the rapid growth of every major AI agent platform.

However, autonomy brings new risks. An ungoverned agent can misuse tools, expose secrets, modify files incorrectly, or apply unsafe dependency upgrades. Therefore, understanding how agentic AI behaves, how AI agents operate in real workflows, and how AI agent platforms enforce safety is essential for DevSecOps and AppSec teams.

This guide explains how agentic AI works, how it fits into modern engineering processes, and how to secure it at every stage of the software lifecycle.

What Is Agentic AI?

Agentic AI refers to AI systems that operate with a goal and can take autonomous actions to achieve it. Instead of simply predicting text, the system executes multi-step tasks, calls external tools, writes and edits code, evaluates its own results, and continues until the job is done.

Key traits of agentic AI

- Goal-directed behavior

- Multi-step reasoning and planning

- Autonomous tool use (shell, APIs, editors, tests)

- Self-correction and reflection loops

- Long-running workflows without human oversight

These abilities move AI from an “assistant” to an “actor.” That autonomy introduces new responsibilities for engineering teams: safety must be considered from the beginning, especially when agents interact with code, infrastructure, or production workflows.

Agentic AI vs Traditional AI System

| Feature | Traditional AI | Agentic AI |
|---|---|---|
| Interaction | Prompt → Output | Multi-step execution |
| Autonomy | None | Yes |
| Tool Use | Limited | Core capability |
| State | Stateless | State-aware |
| Risk Level | Moderate | High (runs real actions) |

Agentic AI is not a bigger LLM. It’s a system designed to do things, not just say things.

How AI Agents Work (The Agentic Loop Explained Clearly)

Most AI agents follow some variation of the same loop:

    while not goal_reached:
        observe()
        plan()
        act()
        reflect()

What this means in practice

The agentic loop gives an AI system the ability to move through tasks step by step. To clarify, each stage has a specific role:

- observe(): read the environment, gather logs, inspect files

- plan(): generate a set of actionable steps

- act(): call APIs, run commands, modify code, or update data

- reflect(): check output, analyze errors, and decide the next step

Because this loop repeats until a goal is achieved, an agent may interact with tools dozens or hundreds of times. Consequently, small misconfigurations can produce big impacts.
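The loop above can be sketched as a small runnable toy. This is only an illustration, not how any particular agent framework is implemented: the `ToyAgent` class, its "failing tests" goal, and the `max_steps` cap are all hypothetical names introduced here.

```python
from dataclasses import dataclass, field


@dataclass
class ToyAgent:
    """Toy agent whose goal is to bring a failing-test count down to zero."""
    failing_tests: int
    max_steps: int = 10  # hypothetical safety cap, not from the article
    history: list = field(default_factory=list)

    def observe(self) -> int:
        # Read the environment: here, just the current failure count.
        return self.failing_tests

    def plan(self, observed: int) -> str:
        # Choose the next step based on the observation.
        return "fix_one_test" if observed > 0 else "stop"

    def act(self, step: str) -> None:
        # Perform the step; a real agent would call tools here.
        if step == "fix_one_test":
            self.failing_tests -= 1

    def reflect(self, step: str) -> bool:
        # Record the outcome and report whether the goal is reached.
        self.history.append((step, self.failing_tests))
        return self.failing_tests == 0

    def run(self) -> bool:
        goal_reached = self.failing_tests == 0
        steps = 0
        while not goal_reached and steps < self.max_steps:
            observed = self.observe()
            step = self.plan(observed)
            self.act(step)
            goal_reached = self.reflect(step)
            steps += 1
        return goal_reached


agent = ToyAgent(failing_tests=3)
print(agent.run(), len(agent.history))  # prints: True 3
```

Even in this toy, the step cap matters: with `failing_tests=20` and `max_steps=5`, the loop stops without reaching its goal instead of running on.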

Agentic AI in Software Development

Agentic AI is transforming the engineering workflow far more deeply than code-completion tools ever did. Instead of suggesting a few lines, an agent can now:

- Write multi-file features

- Produce tests and fix failed ones

- Review pull requests

- Identify vulnerabilities

- Refactor legacy codebases

- Upgrade dependencies

- Update documentation

- Orchestrate CI/CD tasks

This is where AI coding agents come in.

AI Coding Agent: How Autonomous Systems Write, Fix, and Review Code

An AI coding agent is an autonomous system that reads code, writes changes, runs tests, and adjusts its strategy based on the results. In contrast to a traditional code assistant that waits for a prompt, an AI coding agent creates its own plan and continues working until the task is complete.

What an AI coding agent can do

In practice, a coding agent may:

- Modify multiple files across a repository

- Execute commands such as tests, builds, or linters

- Fix compilation or runtime errors

- Retry actions after failure and choose a safer path

- Suggest and apply patches based on project context

- Create pull requests automatically for review

Several tools already support this behavior, including Claude Code, Replit Agents, Cursor IDE, GitHub’s upcoming agent APIs, and VS Code extensions designed for agentic workflows.

Benefits

These capabilities bring clear advantages:

- Faster iterations across the development cycle

- Less manual work for repetitive tasks

- Continuous improvement loops that help teams ship faster

Security risks (critical for AppSec)

However, autonomy introduces new risks. For instance:

- An agent may apply unsafe file modifications

- A shell command could run in the wrong environment

- Sensitive logs might leak secrets accidentally

- Secure config files can be overwritten

- Dependency upgrades might introduce regressions

- Incorrect model output can be applied without validation

Because coding agents act instead of assist, they require strong guardrails, strict permissions, and continuous monitoring. This ensures that the benefits of agentic AI do not introduce new vulnerabilities into the SDLC.

What Is an AI Agent Platform?

An AI agent platform provides the runtime, orchestration, and safety layers needed to operate agentic AI reliably. It manages planning, memory, tool execution, guardrails, and environment control so that agents can complete multi-step tasks. In other words, it is the operating system that allows agentic AI to function beyond a single prompt.

Several leading platforms already define this space. For example:

- OpenAI Agents API

- LangGraph (LangChain)

- Google Workspace Agents

- UiPath AI Agents

- Replit Agents

- n8n AI Agent

These platforms all follow the same general pattern, although their safety models vary significantly.

What a good AI agent platform should provide

A strong platform includes robust engineering fundamentals as well as AppSec considerations. For instance, a complete platform usually offers:

- Tooling: a sandboxed shell, file operations, and API access with strict permission boundaries

- Planning modules: LLM-driven workflow creation that can break down goals into actionable steps

- Memory: short-term and long-term context to support multi-step execution

- Policies and guardrails: enforcement mechanisms that block unsafe actions and restrict tool behavior

- Observability: logs, traces, diffs, and evaluations that make agent actions transparent

- Versioning: reproducibility for agent sessions, workflows, and tool configurations

Beyond platform features, authoritative guidelines reinforce the importance of predictability and control. For example, the NIST AI Risk Management Framework highlights traceability and governance as key factors when deploying autonomous systems. Similarly, the OWASP Top 10 for LLM Applications identifies common risks in agentic workflows, including insecure tool use, excessive permissions, and plugin misconfigurations.

Because many platforms focus primarily on automation, engineering teams often require stronger safeguards. This is especially important when an agent generates code, modifies files, or interacts with CI and production systems. As a result, policies, guardrails, and dependency governance become essential components of any safe agentic AI workflow.

Agentic AI Use Cases for Engineering & DevSecOps

| Category | Agentic AI Use Cases |
|---|---|
| Developer Productivity | Build small features end to end; improve code quality; generate tests automatically; complete TODOs in context; document APIs and components |
| DevOps Automation | Run checks before merges; clean dependency issues; manage build workflows; update CI configurations safely |
| AppSec Automation | Fix SAST and SCA findings; restrict risky tool calls; detect unsafe connectors; evaluate dependency upgrades; validate policies before merge |

Agentic AI Security Risks

Most enterprise articles avoid the risk discussion. However, for engineering and AppSec teams, it is the most important part of adopting agentic AI safely. Below, you will find a more technical breakdown based on real behaviors observed in autonomous agents.

1. Tool Misuse (Shell, API, File System)

Agentic AI can run the wrong command at the wrong time.

For example:

A coding agent runs npm audit fix --force to “improve security.” The --force flag allows semver-major upgrades, so the agent unintentionally moves a dependency to a breaking version. If that change ships unreviewed, the result can be a production outage.

Moreover, an agent may execute a diagnostic command that prints environment variables into a log. This exposes secrets and expands the attack surface.

This maps to:

OWASP LLM05:2025 Improper Output Handling

OWASP LLM06:2025 Excessive Agency
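One way to reduce the log-leak scenario described above is to mask secret-looking values before tool output is written anywhere. The sketch below is a minimal illustration under a stated assumption: secrets are identified purely by variable name (`KEY`, `TOKEN`, `SECRET`, `PASSWORD`), which is a hypothetical convention and will miss secrets stored under other names.

```python
import re

# Hypothetical naming convention: variables whose names contain
# these words are treated as secrets.
SENSITIVE_NAME = re.compile(r"(KEY|TOKEN|SECRET|PASSWORD)", re.IGNORECASE)
MASK = "***REDACTED***"


def redact_env(env: dict) -> dict:
    """Return a copy of env with secret-looking values masked."""
    return {
        name: MASK if SENSITIVE_NAME.search(name) else value
        for name, value in env.items()
    }


def redact_text(text: str, env: dict) -> str:
    """Mask secret values that appear verbatim in tool output."""
    for name, value in env.items():
        if value and SENSITIVE_NAME.search(name):
            text = text.replace(value, MASK)
    return text


sample_env = {"PATH": "/usr/bin", "API_TOKEN": "tok-12345"}
print(redact_env(sample_env))
print(redact_text("curl -H 'Authorization: tok-12345'", sample_env))
```

Name-based matching is a coarse filter. It works best as a last line of defense behind scoped credentials, not as a replacement for them.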

2. API Key Abuse

Many agents operate with overly broad credentials. If an API key grants full write access, the agent inherits the same power, which turns a single misplaced command into a system-wide modification.

This maps to:

OWASP LLM06:2025 Excessive Agency

3. MCP / API Misconfiguration

Misconfigured connectors often become silent risks. In particular, missing origin validation in MCP or API integrations can allow an agent to access internal tools or sensitive secret stores.

This maps to:

OWASP LLM07 (2023 edition): Insecure Plugin Design
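Origin validation for a connector can be as simple as an exact-match allowlist on scheme, host, and port. The following is a sketch using only the Python standard library; the host `mcp.internal.example` and the `ALLOWED_ORIGINS` set are hypothetical, and a real MCP deployment may enforce this at a different layer.

```python
from urllib.parse import urlsplit

# Hypothetical allowlist: scheme, host, and port must all match exactly.
ALLOWED_ORIGINS = {("https", "mcp.internal.example", 443)}
DEFAULT_PORTS = {"https": 443, "http": 80}


def is_allowed_origin(url: str) -> bool:
    """Return True only if the URL's origin is on the allowlist."""
    try:
        parts = urlsplit(url)
        port = parts.port  # raises ValueError for an invalid port
    except ValueError:
        return False  # malformed URL: reject rather than guess
    scheme = parts.scheme.lower()
    host = (parts.hostname or "").lower()
    if port is None:
        port = DEFAULT_PORTS.get(scheme)
    return (scheme, host, port) in ALLOWED_ORIGINS


print(is_allowed_origin("https://mcp.internal.example/tools"))
print(is_allowed_origin("https://mcp.internal.example@evil.test/tools"))
```

Comparing the parsed hostname, rather than checking whether the URL string starts with or contains the trusted host, avoids lookalike bypasses such as `mcp.internal.example.evil.test` or a trusted name placed in the userinfo part of the URL.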

4. Dependency Upgrades Without Verification

Agents often upgrade dependencies because “a new version exists.”

However, not every new version is safe.

This is where EPSS scoring, reachability, and Remediation Risk become critical:

- EPSS estimates the probability that a vulnerability will be exploited in the wild within the next 30 days

- Reachability checks whether vulnerable code paths actually run

- Remediation Risk identifies whether a version change may introduce breaking behavior

Without these checks, agent autonomy becomes unsafe and unpredictable.
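The three checks above can be combined into a simple gate that runs before an agent applies an upgrade. This is a minimal sketch, not Xygeni's Remediation Risk logic: it approximates "breaking behavior" with a semver major-version bump, and the `UpgradeCandidate` type, the `decide` function, and the 0.1 EPSS threshold are assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass
class UpgradeCandidate:
    package: str
    current: str     # e.g. "4.17.20"
    target: str      # e.g. "5.0.0"
    epss: float      # exploitation probability, 0.0 to 1.0
    reachable: bool  # does the application call the vulnerable code path?


def major(version: str) -> int:
    # Assumes plain "MAJOR.MINOR.PATCH" version strings.
    return int(version.split(".")[0])


def decide(c: UpgradeCandidate, epss_threshold: float = 0.1) -> str:
    """Return 'auto-apply', 'needs-review', or 'defer' for an upgrade."""
    breaking = major(c.target) > major(c.current)
    urgent = c.reachable and c.epss >= epss_threshold
    if not breaking:
        # Low remediation risk: the agent may apply it (tests should still run).
        return "auto-apply"
    # A major bump may break callers, so it is never applied unattended.
    return "needs-review" if urgent else "defer"


print(decide(UpgradeCandidate("lib", "1.2.3", "1.2.9", 0.50, True)))
print(decide(UpgradeCandidate("lib", "1.2.3", "2.0.0", 0.50, True)))
print(decide(UpgradeCandidate("lib", "1.2.3", "2.0.0", 0.01, False)))
```

The point of the design is that exploit likelihood and reachability decide how urgent a fix is, while the size of the version jump decides whether a human must approve it.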

5. Infinite or Unbounded Loops

Agents can also enter loops that run indefinitely. For instance, a loop may:

- Spam API calls

- Delete and re-write files repeatedly

- Trigger rate limiting or outages

- Flood logs with sensitive data

This maps to:

OWASP LLM10:2025 Unbounded Consumption
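A common mitigation for runaway loops is a budget that the runtime charges before every tool call. The sketch below is one possible shape for such a guard; `RunBudget`, `BudgetExceeded`, and the specific limits are hypothetical names and values, not part of any platform named in this article.

```python
import time


class BudgetExceeded(RuntimeError):
    """Raised when the agent runs past one of its limits."""


class RunBudget:
    def __init__(self, max_steps: int, max_seconds: float, max_repeats: int = 3):
        self.max_steps = max_steps
        self.max_seconds = max_seconds
        self.max_repeats = max_repeats
        self.steps = 0
        self.started = time.monotonic()
        self.last_action = None
        self.repeats = 0

    def charge(self, action: str) -> None:
        """Call before every tool invocation; raises when a limit is hit."""
        self.steps += 1
        if self.steps > self.max_steps:
            raise BudgetExceeded(f"step limit {self.max_steps} reached")
        if time.monotonic() - self.started > self.max_seconds:
            raise BudgetExceeded(f"time limit {self.max_seconds}s reached")
        # Count consecutive identical actions to catch tight loops early.
        self.repeats = self.repeats + 1 if action == self.last_action else 1
        self.last_action = action
        if self.repeats > self.max_repeats:
            raise BudgetExceeded(f"action repeated {self.repeats} times: {action}")


budget = RunBudget(max_steps=50, max_seconds=300, max_repeats=2)
try:
    for _ in range(5):
        budget.charge("rm build/ && make")  # same action again and again
except BudgetExceeded as exc:
    print("stopped:", exc)
```

The repeat counter only catches back-to-back identical actions. A loop that alternates between two actions would still be stopped, but only by the step or time limit.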

Moreover, many of the security challenges introduced by agentic systems also appear in broader AI security practices. For a deeper overview of these foundations, you can read our guide on AI cybersecurity and how modern teams mitigate model-driven risks.

Agentic AI Architecture

| Layer | Role | Examples | Risks |
|---|---|---|---|
| LLM | Reasoning | GPT, Claude, Gemini | Hallucinations, unsafe plans |
| Agent Runtime | Autonomy loop | LangGraph, ReAct | Infinite loops, tool misuse |
| Tools and APIs | Execution | Shell, Git, Databases, CI tools | API key abuse, privilege escalation |
| Codebase | Project files | Source files, configuration files | Incorrect edits, regressions |
| CI/CD | Delivery | GitHub, GitLab, Jenkins | Unsafe merges, environment escape |

Securing Agentic AI in DevSecOps

Adopting agentic AI safely requires a layered strategy. Therefore, teams should combine guardrails, permission scoping, safe dependency management, and continuous monitoring to keep autonomy predictable.

1. Guardrails

Guardrails provide the first layer of protection. For example, they define:

- Allowed tools

- Allowed origins (MCP)

- Input validation rules

- Output sanitization

- File access scope

Guardrails must run both locally and in CI/CD.
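Two of the items above, allowed tools and file access scope, can be illustrated with a single policy check that runs before each tool call. This is a sketch only: the tool names, the `/workspace/repo` root, and the protected file names are hypothetical values, and a production guardrail would usually load them from a reviewed policy file.

```python
from pathlib import Path
from typing import Optional

# Hypothetical policy values for illustration only.
ALLOWED_TOOLS = {"read_file", "write_file", "run_tests"}
WORKSPACE = Path("/workspace/repo")
PROTECTED_NAMES = {".env", "secrets.yaml"}


def check_action(tool: str, target: Optional[str] = None) -> tuple:
    """Return (allowed, reason) for a proposed tool call."""
    if tool not in ALLOWED_TOOLS:
        return False, f"tool '{tool}' is not on the allowlist"
    if target is not None:
        # resolve() collapses ".." so traversal cannot escape the workspace.
        path = (WORKSPACE / target).resolve()
        if not path.is_relative_to(WORKSPACE.resolve()):
            return False, "path is outside the workspace"
        if tool == "write_file" and path.name in PROTECTED_NAMES:
            return False, f"'{path.name}' is write-protected"
    return True, "ok"


print(check_action("shell"))
print(check_action("read_file", "../../etc/passwd"))
print(check_action("write_file", "src/app.py"))
```

Because the function is pure and has no dependency on the agent runtime, the same check can run in a local pre-commit hook and in a CI job. `Path.is_relative_to` requires Python 3.9 or later.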

2. Permission Scoping

In addition to guardrails, permission scoping limits what an agent can reach. For example:

- Short-lived tokens

- Principle of least privilege

- Read-only contexts for most actions
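The three practices above can be sketched together as a token that carries an explicit scope set and an expiry time. This is a simplified in-memory illustration: `AgentToken`, `issue_token`, and the scope strings are hypothetical, and real deployments would rely on their identity provider's short-lived credentials instead.

```python
import time
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class AgentToken:
    scopes: frozenset  # e.g. frozenset({"repo:read"})
    expires_at: float  # Unix timestamp after which the token is invalid

    def allows(self, scope: str, now: Optional[float] = None) -> bool:
        """True only if the token is unexpired and carries the scope."""
        now = time.time() if now is None else now
        return now < self.expires_at and scope in self.scopes


def issue_token(scopes, ttl_seconds: float = 300.0,
                now: Optional[float] = None) -> AgentToken:
    """Issue a token limited to the given scopes and lifetime."""
    now = time.time() if now is None else now
    return AgentToken(frozenset(scopes), now + ttl_seconds)


# Read-only by default: write access must be requested explicitly.
token = issue_token({"repo:read"}, ttl_seconds=300)
print(token.allows("repo:read"))
print(token.allows("repo:write"))
```

Defaulting to a read-only scope means a misplaced write command fails at the permission check instead of reaching the repository.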

3. Safe Dependency Management

Before agents upgrade libraries, the system must:

- Check EPSS

- Evaluate reachability

- Run Remediation Risk

- Prevent breaking changes

This is one of the most overlooked risks.

4. Continuous Monitoring

Finally, strong observability keeps autonomy under control. Teams should track:

- Agent actions

- File edits

- Tool calls

- Logs and diffs

- Policy triggers

- PR creation

Without observability, autonomy becomes chaos.
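As a rough illustration of what tracking these items can look like, the sketch below serializes each agent action as one JSON line suitable for an append-only log. The field names and the `audit_record` helper are assumptions made for this example, not a format defined by any tool mentioned in this article.

```python
import hashlib
import json
import time


def audit_record(agent_id: str, tool: str, args: dict,
                 allowed: bool, diff: str = "") -> str:
    """Serialize one agent action as a JSON line for an append-only log."""
    record = {
        "ts": time.time(),
        "agent_id": agent_id,
        "tool": tool,
        "args": args,
        "allowed": allowed,  # result of the policy check, if any
        # Store a hash of the diff so the log stays small but edits are traceable.
        "diff_sha256": hashlib.sha256(diff.encode()).hexdigest() if diff else None,
    }
    return json.dumps(record, sort_keys=True)


line = audit_record(
    "agent-1", "write_file", {"path": "src/app.py"},
    allowed=True, diff="-old line\n+new line\n",
)
print(line)
```

Recording denied actions alongside allowed ones is useful: a burst of policy denials is often the first visible sign that an agent's plan has gone wrong.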

How Xygeni Enables Safe Agentic AI

Agentic AI brings speed and autonomy to development, but it also increases the need for clear boundaries. To support this shift, Xygeni adds safety controls directly into the SDLC so teams can use agentic AI without giving up stability or trust. Each capability aligns with how developers already work, making safety part of the workflow instead of an extra step.

Guardrails

Guardrails provide consistent policy enforcement across repositories, pull requests, CI pipelines, and local environments. In addition, they help ensure that agents operate within defined limits and avoid actions that may cause regressions or expose sensitive data.

Xygeni Bot

The Xygeni Bot brings automated remediation into the development process while staying within strict permissions. It:

- Works through Git

- Creates pull requests automatically

- Follows scoped access rules

- Never executes outside approved paths

As a result, developers retain control while reducing manual workloads.

AI Auto-Fix with Customer Models

Some teams require full privacy over source code. For this reason, Xygeni supports customer-provided AI models. The CLI connects directly to the configured model so organizations can apply AI-generated fixes without sending data outside their environment.

Remediation Risk and Reachability

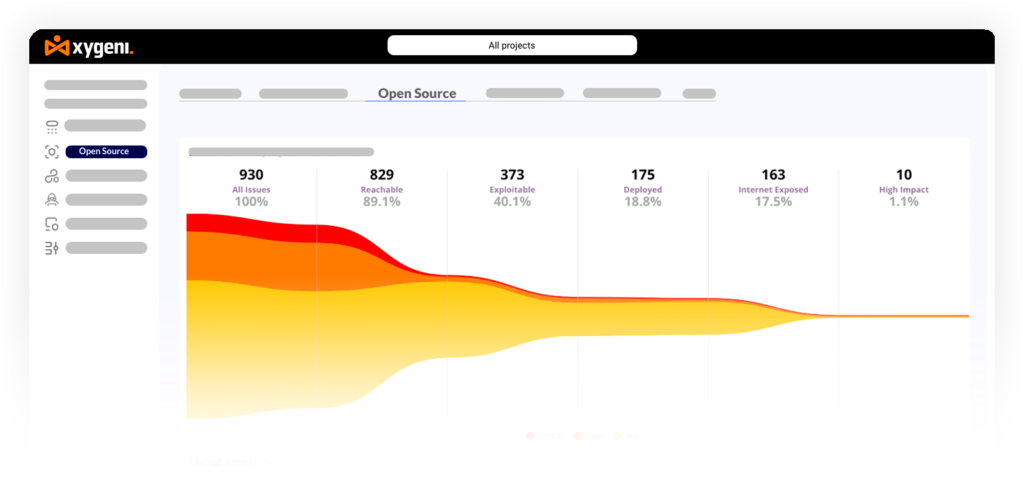

Dependency upgrades can be risky, especially when done autonomously. Remediation Risk evaluates which versions are safe to adopt, while Reachability identifies whether a vulnerability can actually be triggered. Together, these features reduce regressions and support safer agent-driven upgrades.

When combined, these capabilities give teams a practical foundation for adopting agentic AI while keeping control of code quality, integrity, and security.

FAQ: Agentic AI

What is agentic AI?

Agentic AI is a type of artificial intelligence that can plan, act, and complete multi-step tasks autonomously using tool calls and structured reasoning. In fact, it can operate through several steps without waiting for new instructions.

What are AI agents?

AI agents are software systems that pursue a goal by following an observe, plan, act, and reflect loop. They can break down goals, choose actions, and adjust their behavior with minimal guidance.

What is an AI coding agent?

An AI coding agent writes, edits, tests, and reviews code while adjusting its approach based on errors or feedback. Moreover, it can retry actions and refine its plan during each loop.

What is an AI agent platform?

An AI agent platform provides the orchestration, sandboxing, memory, and tool integrations required to run agentic AI safely at scale. Furthermore, it supplies guardrails and observability to keep actions predictable.

Is agentic AI safe?

Agentic AI can be safe when combined with guardrails, scoped permissions, dependency governance, and strong AppSec controls. Therefore, limiting what agents can access or modify is essential for secure adoption.

Final Thoughts: Secure Agentic AI by Design

Agentic AI marks a major shift in how software teams work. It improves developer productivity, automates complex tasks, and introduces new ways to manage workflows. However, autonomy also brings added responsibility. Agents can write code, modify configurations, or trigger builds, so safety must be built into the process from the beginning.

Moreover, secure adoption depends on predictable boundaries. By adding guardrails, version governance, runtime checks, and automated remediation, organizations can use agentic AI with confidence. The goal is not to limit the agent, but rather to provide the structure it needs to operate safely and consistently.

With these controls in place, agentic AI becomes a practical and reliable partner. When they run inside the same workflows that developers already use, teams gain speed without increasing risk.

In summary, with Xygeni’s ASPM capabilities embedded across code, pipelines, and agent workflows, agentic AI supports engineering goals while protecting the SDLC end to end.

About the Author

Written by Fátima Said, Content Marketing Manager specialized in Application Security at Xygeni Security.

Fátima creates developer-friendly, research-based content on AppSec, ASPM, and DevSecOps. She translates complex technical concepts into clear, actionable insights that connect cybersecurity innovation with business impact.