Search engines were built to index content. However, attackers use them to index your mistakes. The query allintext:login filetype:log may look harmless. In reality, it is one of the simplest ways to discover exposed log files containing authentication flows, credentials, tokens, and internal infrastructure data.

If Google can see those logs, attackers can too. Once a log is indexed, discovery is only a matter of time. Moreover, when credentials appear in a publicly accessible file, the breach is already in motion.

1. Why allintext:login filetype:log Is More Dangerous Than It Looks

A Google dork is a search query that uses advanced operators to locate sensitive or misconfigured content indexed by search engines. It does not exploit Google. Instead, it exploits your exposure.

This query combines two operators:

- allintext: returns pages where all terms appear in the body text

- filetype:log restricts results to .log files

Therefore:

allintext:login filetype:log

Means: “Show me log files that contain the word login.”

At first glance, that seems trivial. However, in practice, it often returns:

- Publicly exposed web server logs

- CI/CD logs uploaded as artifacts

- Debug logs accidentally committed to repositories

- Application logs with plaintext credentials

This is not a search engine bug. Instead, it is a data exposure vulnerability caused by misconfiguration. Google simply indexed what was publicly accessible.

2. What Attackers Actually Find in Exposed Log Files

When attackers run allintext:login filetype:log, they are not browsing randomly. They are looking for authentication traces.

2.1 Plaintext Credentials

Logs frequently contain entries such as:

POST /login username=admin password=SuperSecret123

or

Authorization: Basic YWRtaW46cGFzc3dvcmQ=

Or even SMTP credentials:

smtp_user=mailer

smtp_pass=ProdMailPass!

Logging authentication payloads is one of the fastest ways to leak production credentials. Consequently, a single exposed log file can invalidate your entire access control model.
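A Basic Authorization header is not a safer thing to log than a password, because Base64 is an encoding, not encryption. The short sketch below decodes the sample header shown above; the function name `decode_basic_auth` is illustrative only.

```python
import base64

# Header value copied from the sample log line above.
header = "Authorization: Basic YWRtaW46cGFzc3dvcmQ="

def decode_basic_auth(header_line: str) -> tuple[str, str]:
    """Return (username, password) from a logged Basic Authorization header."""
    encoded = header_line.split("Basic ", 1)[1].strip()
    decoded = base64.b64decode(encoded).decode("utf-8")
    username, _, password = decoded.partition(":")
    return username, password

print(decode_basic_auth(header))  # ('admin', 'password')
```

Anyone who can read the log can run the same two lines, so a logged Basic header should be treated exactly like a logged plaintext password.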

2.2 Session Tokens & JWTs

Even when passwords are not logged, tokens often are.

For example:

Authorization: Bearer eyJhbGciOiJIUzI1NiIsInR5cCI6IkpXVCJ9...

Set-Cookie: session=abc123xyz789;

Generated API token: sk_live_ABC123SECRET

A valid JWT or session cookie inside a .log file can enable:

- Session hijacking

- Privilege escalation

- Lateral movement across internal systems

In other words, tokens in logs turn debugging output into an authentication bypass vector.
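To see why a logged JWT is sensitive even before it is replayed, note that its header and payload segments are only base64url-encoded. The sketch below decodes the header segment of the sample Bearer token above without any key; the helper name `decode_jwt_segment` is an assumption for illustration, and the payload is not decoded because it is elided in the sample.

```python
import base64
import json

def decode_jwt_segment(segment: str) -> dict:
    """Decode one base64url JWT segment (header or payload) without verifying it."""
    padded = segment + "=" * (-len(segment) % 4)  # restore stripped padding
    return json.loads(base64.urlsafe_b64decode(padded))

# Header segment from the sample Bearer token above; the rest is elided there.
header_segment = "eyJhbGciOiJIUzI1NiIsInR5cCI6IkpXVCJ9"
print(decode_jwt_segment(header_segment))  # {'alg': 'HS256', 'typ': 'JWT'}
```

The same call on a payload segment would reveal its claims (subject, roles, expiry), and the complete token can be presented to the API as-is until it expires or is revoked.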

2.3 CI/CD Artifacts

Build logs are especially dangerous. In fact, CI/CD systems often print environment variables during build steps.

Attackers frequently discover build logs containing lines such as:

AWS_ACCESS_KEY_ID=AKIA...

DATABASE_URL=postgres://admin:password@internal-db:5432/app

If CI/CD artifacts are public, then secrets are public. The Google dork simply accelerates discovery.
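One way to keep build steps from echoing values like these is to mask likely secrets before anything is printed. The following is a minimal sketch, assuming that variable names are a usable hint; the `SENSITIVE_HINTS` list and the `safe_env_dump` helper are hypothetical and would need tuning for a real pipeline.

```python
# Hypothetical name fragments that suggest a secret; tune for your pipeline.
SENSITIVE_HINTS = ("KEY", "SECRET", "TOKEN", "PASSWORD", "PASS", "URL")

def safe_env_dump(env: dict[str, str]) -> list[str]:
    """Return NAME=value lines with likely-secret values masked."""
    lines = []
    for name in sorted(env):
        if any(hint in name.upper() for hint in SENSITIVE_HINTS):
            lines.append(f"{name}=***")  # never echo the real value
        else:
            lines.append(f"{name}={env[name]}")
    return lines

sample = {
    "AWS_ACCESS_KEY_ID": "AKIA...",
    "DATABASE_URL": "postgres://admin:password@internal-db:5432/app",
    "CI": "true",
}
print("\n".join(safe_env_dump(sample)))
```

In a build step this would be called with `dict(os.environ)` instead of the sample dictionary. Name-based masking is deliberately over-cautious: it hides some harmless values, which is usually an acceptable trade for build output.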

2.4 Cloud & Infrastructure Data

Exposed logs often reveal:

- AWS access keys

- Azure storage connection strings

- Internal service URLs

- Database credentials

- Redis endpoints

Even if credentials are rotated later, the attacker now possesses:

- Infrastructure mapping

- Naming conventions

- Target intelligence for future attacks

Therefore, exposed logs provide both access and reconnaissance.

3. How These Logs Become Public in the First Place

Logs do not magically appear in Google. They become indexed because they were publicly reachable.

3.1 Misconfigured Web Servers

Common patterns include:

- /logs/ directories accessible without authentication

- Directory listing enabled

- Nginx or Apache serving raw .log files

If a log is reachable over HTTP, it is indexable.
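A quick self-audit can confirm whether any of these patterns apply to a host you own. The sketch below requests a few candidate paths without credentials and reports those that answer 200. The path list and function names are hypothetical, and it should only be pointed at systems you are authorized to test.

```python
import urllib.error
import urllib.request

# Hypothetical candidate paths; extend with the paths your stack actually uses.
CANDIDATE_PATHS = ["/logs/", "/logs/app.log", "/debug.log", "/error.log"]

def http_status(url: str) -> int:
    """Return the HTTP status for an unauthenticated GET, or 0 if unreachable."""
    try:
        with urllib.request.urlopen(url, timeout=5) as response:
            return response.status
    except urllib.error.HTTPError as err:
        return err.code  # 401, 403, 404 and similar still carry a status
    except (urllib.error.URLError, OSError):
        return 0

def find_exposed_paths(base_url: str, fetch=http_status) -> list[str]:
    """List candidate log URLs that answer 200 without credentials."""
    urls = [base_url.rstrip("/") + path for path in CANDIDATE_PATHS]
    return [url for url in urls if fetch(url) == 200]
```

Calling `find_exposed_paths("https://staging.example.com")` (a placeholder host) returns the URLs that need to be locked down. The `fetch` parameter exists so the check can be exercised in tests without network access.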

3.2 CI/CD Artifact Exposure

Typical mistakes:

- Public artifacts enabled in GitHub Actions

- Logs uploaded to open S3 buckets

- Pipeline traces accessible without authentication

A pipeline that stores logs in a public bucket effectively publishes its secrets.

3.3 Debug Mode in Production

Framework defaults can be dangerous:

APP_DEBUG=true

Additionally, excessive request logging may print:

- Headers

- Tokens

- Full request bodies

Debug logging in production transforms your application into a credential exporter.
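A startup guard is one way to keep a debug flag from silently reaching production. This is a minimal sketch, assuming the environment is identified by a variable named `APP_ENV` (an assumption, not something the text above specifies) alongside the `APP_DEBUG` flag shown earlier.

```python
def assert_safe_logging_config(env: dict[str, str]) -> None:
    """Raise if debug mode is enabled while the app is running in production."""
    # APP_ENV is an assumed variable name; adapt it to your framework.
    is_production = env.get("APP_ENV", "").lower() == "production"
    debug_enabled = env.get("APP_DEBUG", "false").lower() in ("1", "true", "yes")
    if is_production and debug_enabled:
        raise RuntimeError("APP_DEBUG must be disabled when APP_ENV=production")

# Call once at startup, before any logger is configured.
assert_safe_logging_config({"APP_ENV": "production", "APP_DEBUG": "false"})
```

In a real service the argument would be `dict(os.environ)`. Failing fast at boot is a design choice: a crashed deployment is noticed immediately, while verbose request logging can go unnoticed for months.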

3.4 Docker & Container Logs

Containerized environments introduce new exposure paths:

- Logs mounted into shared volumes

- Sidecars exporting logs to unsecured endpoints

- Log dashboards with public access

If container logs are exposed via HTTP or open storage, they are searchable. Eventually, they are indexed.

4. Realistic Attack Flow: From Dork to Breach

A typical attack chain looks like this:

Attacker runs:

allintext:login filetype:log

Finds an exposed .log file.

Extracts:

- JWT token

- Basic Auth header

- Database connection string

Attempts authentication against:

- API endpoints

- Admin panels

- Internal services

If authentication succeeds, the attacker can:

- Escalate privileges

- Move laterally

- Access CI/CD

- Compromise the supply chain

What started as a search query becomes:

- Session hijacking

- Internal credential stuffing

- Pipeline takeover

- Artifact poisoning

All from a publicly indexed log file.

5. Why Logging “Too Much” Is an AppSec Problem

Logging is not neutral. Instead, it creates a secondary data store.

If you log sensitive data, you effectively create a second copy of your secrets.

However, logs are often excluded from threat modeling. Under STRIDE, sensitive data in logs clearly maps to:

Information Disclosure

Therefore, Secure SDLC practices should treat logs as:

- Security-relevant artifacts

- Sensitive assets

- Infrastructure components requiring protection

If your threat model ignores logs, it is incomplete.

6. How to Prevent Credential Leakage in Log Files

6.1 Stop Logging Secrets

Never log:

- Passwords

- Tokens

- API keys

- Session IDs

- Authorization headers

Even in debug mode.

Whenever possible, implement automatic redaction.

6.2 Structured & Safe Logging

Use structured logging with masking and filtering.

Example (Node.js):

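// Values at these paths are censored before the entry is written
const logger = require('pino')({
  redact: ['req.headers.authorization', 'body.password']
});

Example (Python):

import logging
import re

class RedactFilter(logging.Filter):
    def filter(self, record):
        # Mask the value that follows "password=", not just the key
        record.msg = re.sub(r"(password=)\S+", r"\1***", str(record.msg))
        return True

The key principle is simple: secrets must never reach the log sink.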
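The same principle can be applied at the handler level so that values passed as format arguments are covered too. The sketch below is one possible approach, not a complete solution: the pattern list is illustrative, and the class and logger names are hypothetical.

```python
import io
import logging
import re

# Illustrative patterns only; real deployments need a reviewed, tested list.
SECRET_PATTERNS = [
    re.compile(r"(password=)\S+", re.IGNORECASE),
    re.compile(r"(Authorization:\s*(?:Basic|Bearer)\s+)\S+", re.IGNORECASE),
]

class SecretRedactingFilter(logging.Filter):
    """Mask secret values in the fully formatted message before it is emitted."""

    def filter(self, record: logging.LogRecord) -> bool:
        message = record.getMessage()  # merge msg and args first
        for pattern in SECRET_PATTERNS:
            message = pattern.sub(r"\1***", message)
        record.msg, record.args = message, None
        return True

stream = io.StringIO()  # stands in for the real log sink
handler = logging.StreamHandler(stream)
handler.addFilter(SecretRedactingFilter())  # filter on the handler, i.e. at the sink
logger = logging.getLogger("redaction_demo")
logger.addHandler(handler)
logger.propagate = False
logger.warning("POST /login username=admin password=%s", "SuperSecret123")
print(stream.getvalue().strip())  # POST /login username=admin password=***
```

Attaching the filter to the handler rather than to a single logger means every record routed to that sink passes through it. Pattern-based redaction remains a safety net; not logging the value in the first place is still the stronger control.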

6.3 Lock Down Log Storage

Security controls should include:

- Disable directory listing

- Protect /logs/ paths with authentication

- Restrict bucket access

- Apply retention policies

- Encrypt logs at rest

Logs must never be publicly reachable via HTTP.
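Of the controls listed above, retention is the easiest to automate: a log that no longer exists cannot be exposed later. The following is a minimal sketch for local files, assuming logs sit in a single directory and use the .log extension; the function name and the 30-day figure in the usage note are illustrative.

```python
import time
from pathlib import Path

def purge_old_logs(log_dir: Path, max_age_days: int) -> list[Path]:
    """Delete *.log files older than max_age_days and return what was removed."""
    cutoff = time.time() - max_age_days * 86400  # seconds per day
    removed = []
    for path in sorted(log_dir.glob("*.log")):
        if path.is_file() and path.stat().st_mtime < cutoff:
            path.unlink()
            removed.append(path)
    return removed
```

A scheduled job could call `purge_old_logs(Path("/var/log/myapp"), 30)`, where the path is a placeholder. Managed storage such as object buckets usually offers lifecycle rules that achieve the same result without custom code.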

6.4 CI/CD Guardrails

Manual reviews are insufficient. Instead, implement automated controls:

- Secret scanning of logs before artifact publication

- Fail builds if tokens are detected

- Prevent artifact uploads containing credentials

- Hash validation for artifacts

CI/CD should block exposure before indexing happens.
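The first two controls can be approximated with a small gate that runs before artifacts are uploaded. The sketch below is an illustration under stated assumptions rather than a replacement for a dedicated scanner: the rule names and regular expressions are hypothetical and cover only the kinds of secrets shown earlier in this article.

```python
import re

# Illustrative patterns only; dedicated secret scanners ship far larger rule sets.
SECRET_RULES = {
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "basic_auth_header": re.compile(r"Authorization:\s*Basic\s+[A-Za-z0-9+/=]+"),
    "bearer_token": re.compile(r"Authorization:\s*Bearer\s+[A-Za-z0-9._-]+"),
    "url_with_credentials": re.compile(r"\b[a-z][a-z0-9+.-]*://[^\s:/@]+:[^\s@/]+@"),
    "password_assignment": re.compile(r"pass(?:word)?=\S+", re.IGNORECASE),
}

def scan_log(text: str) -> list[tuple[int, str]]:
    """Return (line_number, rule_name) for every suspicious line in a log."""
    findings = []
    for number, line in enumerate(text.splitlines(), start=1):
        for rule_name, pattern in SECRET_RULES.items():
            if pattern.search(line):
                findings.append((number, rule_name))
    return findings

def gate(text: str) -> int:
    """Exit code for a CI step: 1 blocks publication, 0 allows it."""
    return 1 if scan_log(text) else 0
```

A pipeline step could read the build log, call `sys.exit(gate(log_text))`, and run before the upload step so that a non-zero exit stops publication. Findings report only the line number and rule name, so the gate does not copy the secret into yet another log.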

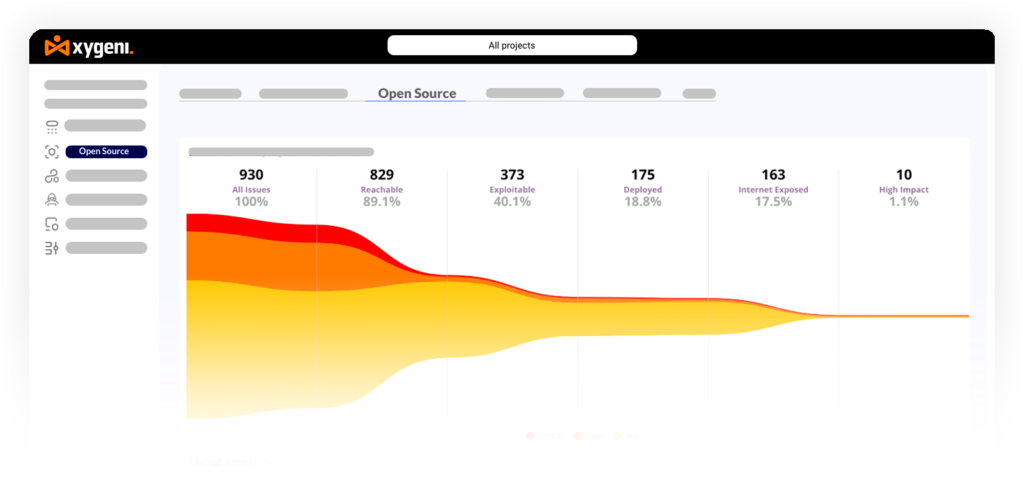

7. How Xygeni Prevents allintext:login filetype:log Incidents

The problem is not the Google dork. The problem is exposure. Therefore, prevention must happen before indexing.

7.1 Secret Detection in Logs and Artifacts

Xygeni scans:

- Application logs

- CI/CD job traces

- Build artifacts

- Docker layers

- Serialized outputs

If credentials, tokens, or sensitive values appear in .log files, Xygeni flags them immediately.

7.2 CI/CD Guardrails That Block Exposure

Instead of relying on manual reviews, Xygeni enforces security at the pipeline level:

dotnet xygeni enforce --rules secrets,artifacts,logs --fail-on-risk

This:

- Fails builds when secrets appear in logs

- Blocks artifact publication

- Prevents accidental public exposure

- Stops unsafe merges before reaching main

If a CI job prints a token, the pipeline fails.

No indexing.

No exposure.

No incident.

7.3 Shift-Left Protection Before Google Sees It

Timing matters.

Instead of reacting to:

allintext:login filetype:log

Xygeni stops the issue:

- At commit time

- During pull request validation

- During pipeline execution

- Before artifact publication

If the log never becomes public, Google never indexes it.
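Independent of any specific product, the commit-time stage can be illustrated with a plain Git pre-commit hook. The sketch below is a generic example and does not represent how Xygeni implements its checks. It refuses commits that stage .log files, and the function names are hypothetical.

```python
import subprocess
import sys

def staged_files() -> list[str]:
    """Paths staged for the next commit (added, copied, or modified)."""
    result = subprocess.run(
        ["git", "diff", "--cached", "--name-only", "--diff-filter=ACM"],
        capture_output=True, text=True, check=True,
    )
    return [line for line in result.stdout.splitlines() if line]

def blocked_log_files(paths: list[str]) -> list[str]:
    """Staged paths that look like log files and should not be committed."""
    return [path for path in paths if path.lower().endswith(".log")]

def main() -> int:
    """Return 1 (abort the commit) if any staged file is a log file."""
    offenders = blocked_log_files(staged_files())
    for path in offenders:
        print(f"refusing to commit log file: {path}", file=sys.stderr)
    return 1 if offenders else 0
```

To use it as a hook, the script would be saved as an executable `.git/hooks/pre-commit` with a Python shebang line and end with `sys.exit(main())`. A matching `*.log` entry in `.gitignore` covers the common case, while the hook catches files that are force-added.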

Final Takeaway: If Google Can Index It, Attackers Already Did

Logs are not harmless. They are rarely temporary, and they are not private by default. Therefore, every log file should be treated as a security-relevant asset, not just debugging output.

If sensitive data reaches a .log file and becomes publicly accessible, it immediately turns into an attack surface. Moreover, once indexed by a search engine, the exposure scales beyond your control.

The solution is not to stop logging. Rather, it is to log responsibly and enforce strict controls around storage and distribution. In other words, security must extend beyond the application itself and into the observability layer.

In practice:

- Stop logging secrets

- Lock down log storage

- Enforce pipeline guardrails

- Automate detection and policy enforcement

Ultimately, prevention is about timing: once allintext:login filetype:log returns your domain, the incident has already begun.