Your developers are shipping features faster than ever. They’re also introducing security vulnerabilities at a rate your current tooling wasn’t designed to handle.

AI coding tools don’t just accelerate development. They accelerate the introduction of insecure code. The Georgia Tech Vibe Security Radar project recorded 35 new CVEs in March 2026 alone directly attributable to AI coding tools, up from 6 in January. Researchers estimate the true count is five to ten times higher across the broader open-source ecosystem. CSA research found that 62% of AI-generated code contains design flaws or known vulnerabilities, even when developers use the latest foundational models.

This isn’t a problem you solve by asking developers to slow down. The answer is building security infrastructure that keeps pace with AI-speed development, and most teams don’t have it yet.

The Gap Most Teams Don’t See Until It’s Too Late

AI coding tools create a specific security problem that traditional AppSec infrastructure wasn’t built for: high-velocity, high-volume code with systematically different failure patterns than human-written code.

Most teams discover this gap the wrong way: when a CVE that their scanner should have caught lands in production, or when a secret committed by an AI-assisted workflow turns up in an attacker’s hands.

| | Without AI-Specific Controls | With Xygeni |
|---|---|---|
| Code vulnerabilities | Higher density, systematic failure patterns | Caught at write-time in the IDE before commit |
| Secrets exposure | 2x higher rate in AI-assisted commits | Continuous scanning + auto-revocation across all layers |
| Malicious dependencies | AI suggests packages without safety checks | Malware detection at publication time, not install time |
| Pipeline risk | No visibility into agentic tool behavior | Behavioral baselines + anomaly detection |
| Outcome | Security debt accumulates at AI speed | Coverage that scales with development velocity |

Why AI-Generated Code Fails in Specific Patterns

Before getting to the controls, it’s worth understanding why AI-generated code fails differently from human-written code, because the failure modes determine which controls actually matter.

Pattern completion over security reasoning

LLMs generate code by predicting statistically likely continuations of patterns they’ve seen in training data. When that training data includes millions of examples of insecure code, the model reproduces those patterns confidently and fluently.

The model isn’t reasoning about security. It’s completing patterns. A request to “add authentication to this endpoint” will produce code that looks like authentication and often functions like authentication, but may omit token expiration, miss authorization checks, or use a deprecated cryptographic primitive, because those omissions are statistically common in the training data.
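To make the omission concrete, here is a minimal sketch of a token verifier that includes the expiry check pattern-completed code tends to leave out. The token format, the `sign_token` and `verify_token` helpers, and the `exp` claim name are assumptions for illustration, not the output of any particular AI tool or library.

```python
import base64
import hashlib
import hmac
import json
import time
from typing import Optional


def sign_token(claims: dict, key: bytes) -> str:
    """Hypothetical helper: base64 JSON payload plus an HMAC-SHA256 signature."""
    payload = base64.urlsafe_b64encode(json.dumps(claims, sort_keys=True).encode())
    signature = hmac.new(key, payload, hashlib.sha256).hexdigest()
    return payload.decode() + "." + signature


def verify_token(token: str, key: bytes, now: Optional[float] = None) -> dict:
    """Verify the signature AND the expiry; raise ValueError on any failure."""
    payload_b64, _, signature = token.rpartition(".")
    expected = hmac.new(key, payload_b64.encode(), hashlib.sha256).hexdigest()
    # Constant-time comparison avoids leaking signature bytes through timing.
    if not hmac.compare_digest(signature.encode(), expected.encode()):
        raise ValueError("bad signature")
    claims = json.loads(base64.urlsafe_b64decode(payload_b64))
    # The step that "looks like authentication" code often omits:
    current = time.time() if now is None else now
    if "exp" not in claims or claims["exp"] <= current:
        raise ValueError("token expired or missing exp claim")
    return claims
```

Delete the last `if` block and the function still passes a happy-path test, which is why a review focused on functional correctness rarely notices the gap.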

Structural correctness without semantic safety

A December 2025 analysis by security firm Tenzai examined 15 production applications built using five major AI coding tools and found 69 vulnerabilities across the sample. Every single application lacked CSRF protection and had no security headers configured. Every tool introduced server-side request forgery (SSRF) vulnerabilities, a clean sweep of basic security failures across all 15 applications.

These aren’t edge cases. They’re systematic gaps in what AI tools optimize for: working code, not secure defaults.

Georgetown CSET separately found XSS vulnerabilities in 86% of AI-generated code samples tested across five major LLMs.

Accelerated secrets exposure

AI-assisted commits expose secrets at more than twice the rate of human-only commits. The CSA research note on vibe coding security puts the figure at 3.2% for AI-assisted commits vs. 1.5% for human-only, and public GitHub saw a 34% year-over-year increase in hardcoded credentials in 2025.

The mechanism is straightforward: developers working at AI speed often paste credentials into prompts as context, and AI tools faithfully include those credentials in generated output. Developers reviewing AI code at speed check for functional correctness, not secret exposure.

Invisible architecture flaws

Traditional security tools excel at finding known vulnerability patterns in static code: SQL injection, XSS, insecure deserialization. They struggle with design-level flaws: missing authentication on an entire API route, broken access control logic, or an authorization model that assumes sequential flow but can be bypassed out of order.

AI-generated code introduces more design flaws because AI tools generate at the feature level, not the system level. The AI has no awareness of the surrounding system’s security model unless explicitly given that context, and most developers don’t think to provide it.

How to Secure AI-Generated Code in Your CI/CD Pipeline

1. Treat AI-generated code as untrusted input at the SAST layer

The most important operational change: don’t reduce SAST coverage because code came from an AI. Do the opposite. Any team with significant AI adoption should expect their finding volume to increase materially, and should configure their tooling accordingly.

In practice this means enabling SAST on every commit, not just PRs. AI tools generate code fast, and developers commit incrementally. Waiting for PR review means findings accumulate before anyone looks at them. It also means tuning SAST severity thresholds specifically for the failure modes of AI code: missing authentication and authorization checks, SSRF, CSRF, insecure deserialization, and hardcoded credentials, vulnerability classes that don’t always score as critical in CVSS but are consistently exploitable.
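One way to picture the threshold tuning is a severity floor applied to the AI-prone vulnerability classes listed above. This is a sketch under assumptions: the category names, the `Finding` shape, and the `effective_severity` helper are hypothetical and don’t correspond to any specific scanner’s rule IDs or configuration format.

```python
from dataclasses import dataclass

# Hypothetical category labels; real SAST tools use their own rule identifiers.
AI_PRONE_CATEGORIES = {
    "missing-authentication",
    "missing-authorization",
    "ssrf",
    "csrf",
    "insecure-deserialization",
    "hardcoded-credentials",
}

SEVERITY_ORDER = ["low", "medium", "high", "critical"]


@dataclass(frozen=True)
class Finding:
    rule_category: str
    severity: str  # one of SEVERITY_ORDER
    path: str


def effective_severity(finding: Finding, floor: str = "high") -> str:
    """Raise findings in AI-prone categories to at least `floor`."""
    if finding.rule_category not in AI_PRONE_CATEGORIES:
        return finding.severity
    current = SEVERITY_ORDER.index(finding.severity)
    minimum = SEVERITY_ORDER.index(floor)
    return SEVERITY_ORDER[max(current, minimum)]
```

The floor only ever raises severity, so findings the scanner already rates critical are left untouched.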

The central challenge is false positive rate. AI tools produce a lot of code quickly, and a high-FPR SAST generates so many findings that developers learn to ignore them. That’s the alert fatigue dynamic that defeats the purpose of scanning entirely.

Xygeni SAST was benchmarked against the OWASP Benchmark and achieved a 100% true positive rate with a 16.7% false positive rate. In an environment where AI-generated code increases finding volume, that precision is what keeps findings actionable rather than ignored. Learn more about Xygeni SAST →

2. Scan for secrets continuously, not just at commit time

Pre-commit hooks are necessary but not sufficient. Developers using AI tools at speed frequently bypass hooks, use web-based AI editors that don’t support them, or generate secrets inside CI scripts rather than application code, where hooks never trigger.

A complete secrets security posture for AI-assisted development needs pre-commit hooks for developers using local AI tools, continuous repo scanning across all branches including full historical commit coverage (valid secrets from old commits are still exploitable), pipeline log scanning (AI-generated CI scripts frequently include credentials as interpolated variables that get printed to build logs), and automatic revocation on detection, because the window between exposure and attacker discovery is often measured in hours, not days.
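The pipeline log scanning layer can be sketched as a pattern match over build output, with hits redacted before they are reported. The two patterns below cover only the widely documented AWS access key ID and GitHub personal access token prefixes; the `scan_log` and `redact` names are illustrative, and a production scanner would cover far more secret types and validate hits.

```python
import re
from typing import Iterable, List, NamedTuple

# Illustrative patterns only; real scanners ship hundreds of detectors.
SECRET_PATTERNS = {
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "github_pat": re.compile(r"\bghp_[A-Za-z0-9]{36}\b"),
}


class SecretHit(NamedTuple):
    line_number: int
    kind: str
    redacted: str


def redact(value: str, keep: int = 4) -> str:
    """Keep a short prefix for triage and mask the rest."""
    return value[:keep] + "*" * (len(value) - keep)


def scan_log(lines: Iterable[str]) -> List[SecretHit]:
    """Return redacted hits so the report itself never contains the secret."""
    hits = []
    for number, line in enumerate(lines, start=1):
        for kind, pattern in SECRET_PATTERNS.items():
            for match in pattern.finditer(line):
                hits.append(SecretHit(number, kind, redact(match.group())))
    return hits
```

Redacting at detection time matters because the scanner’s own output usually ends up in another log or ticket.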

Xygeni Secrets Security detects over 800 secret types across repositories, pipeline logs, IaC files, and container images. The --history scan mode surfaces secrets that are technically old but still valid, a common gap in AI-assisted workflows. Secrets are obfuscated before being logged or sent to the platform, so the detection process itself doesn’t create new exposure. Auto-revocation workflows trigger on detection. Learn more →

3. Apply SCA with malware detection to AI-suggested dependencies

AI coding tools don’t just write code; they also suggest dependencies. A developer asking an assistant to “add a library for JWT parsing” gets a package recommendation that may be a legitimate package, a typosquatted package with a similar name, or a package that was legitimate when the model was trained but has since been compromised.

The CSA 2025 AI-generated code vulnerability research also documents “slopsquatting”: attackers registering the hallucinated package names that AI tools invent, turning a model hallucination directly into a supply chain attack vector. Standard CVE-based SCA doesn’t catch any of these.

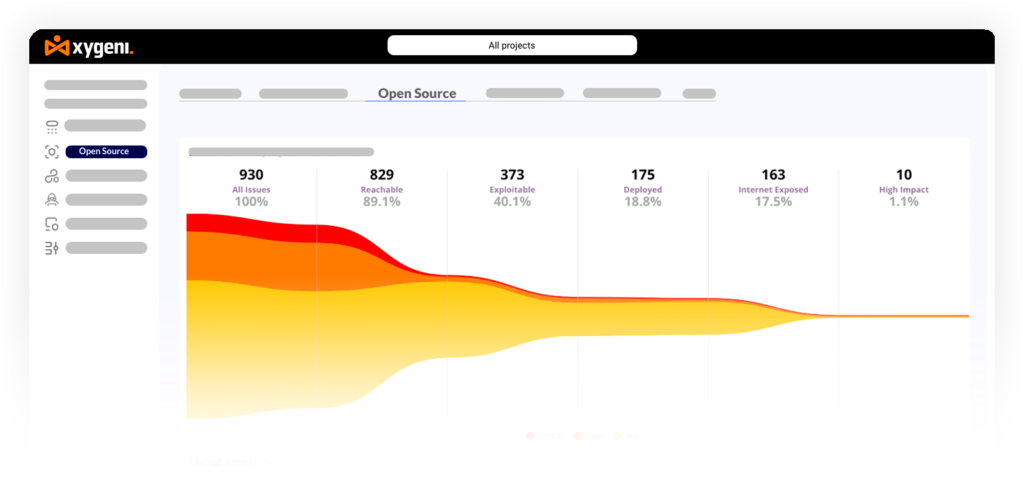

What you actually need: behavioral malware detection that flags packages with suspicious install scripts, unexpected network calls, or obfuscated code; typosquatting and slopsquatting detection that analyzes the full dependency graph for deceptively named packages; and reachability-filtered CVE scanning that distinguishes vulnerable functions that are actually called from those imported but never executed.
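The name-similarity part of typosquatting detection can be sketched with a string-distance check against packages you already trust. The allowlist, the 0.85 threshold, and the `classify_dependency` helper are assumptions for illustration. This covers only deceptive names; it does not inspect install scripts or network behavior, and it does not confirm whether a name exists in a registry.

```python
from difflib import SequenceMatcher
from typing import Tuple

# Illustrative allowlist; in practice this would come from your own dependency policy.
KNOWN_PACKAGES = ("cryptography", "numpy", "pyjwt", "requests")


def similarity(a: str, b: str) -> float:
    return SequenceMatcher(None, a, b).ratio()


def classify_dependency(name: str, threshold: float = 0.85) -> Tuple[str, str]:
    """Return (status, nearest_known) where status is known, suspect, or unknown."""
    normalized = name.lower().replace("_", "-")
    nearest = max(KNOWN_PACKAGES, key=lambda known: similarity(normalized, known))
    if normalized == nearest:
        return ("known", nearest)
    if similarity(normalized, nearest) >= threshold:
        # Close to a trusted name but not identical: a likely typosquat.
        return ("suspect", nearest)
    return ("unknown", nearest)
```

An `unknown` result is not a clean bill of health. A name an assistant suggested that matches nothing you trust is exactly the case slopsquatting exploits, so it should still go to review.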

Xygeni SCA combines real-time malware detection via the Malware Early Warning (MEW) engine, scanning npm, PyPI, Maven, NuGet, RubyGems and other registries at publication time, not just at install time, with a Suspect Dependencies Scanner that detects typosquatting, dependency confusion, and suspicious install scripts by analyzing the full dependency graph. See how it works →

4. Enforce security guardrails in the pipeline, not just in code review

Code review is too slow and too inconsistent to be the primary security control for AI-generated code. Developers reviewing AI output under velocity pressure check functional correctness first. Security correctness, if checked at all, comes second.

Pipeline-level guardrails enforce requirements automatically: block builds that introduce new critical SAST findings above a configurable threshold, block deployment if new secrets are detected in the commit, enforce dependency policy by blocking packages that fail malware checks or aren’t pinned to an exact digest, and require SBOM generation for releases that include AI-assisted code.

The key design principle: guardrails should block or warn, not just report. A finding that doesn’t block anything teaches developers that findings can safely be ignored.
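A blocking gate along these lines might look like the following sketch. The `BuildReport` and `Dependency` shapes, the `sha256:` digest format, and the `evaluate_gate` function are hypothetical stand-ins for whatever your scanners actually emit.

```python
import re
from dataclasses import dataclass, field
from typing import List, Optional

# Assumed digest format for illustration: "sha256:" followed by 64 hex characters.
DIGEST_RE = re.compile(r"^sha256:[0-9a-f]{64}$")


@dataclass
class Dependency:
    name: str
    digest: Optional[str] = None
    malware_check_passed: bool = True


@dataclass
class BuildReport:
    new_critical_findings: int = 0
    new_secrets: int = 0
    dependencies: List[Dependency] = field(default_factory=list)


def evaluate_gate(report: BuildReport, max_new_critical: int = 0) -> List[str]:
    """Return the reasons to block; an empty list means the build may proceed."""
    reasons = []
    if report.new_critical_findings > max_new_critical:
        reasons.append(f"{report.new_critical_findings} new critical SAST finding(s)")
    if report.new_secrets > 0:
        reasons.append(f"{report.new_secrets} new secret(s) detected")
    for dep in report.dependencies:
        if not dep.malware_check_passed:
            reasons.append(f"{dep.name}: failed malware check")
        if dep.digest is None or not DIGEST_RE.match(dep.digest):
            reasons.append(f"{dep.name}: not pinned to an exact digest")
    return reasons
```

In a CI step, the caller would print the reasons and exit non-zero whenever the list is non-empty, so the finding stops the build instead of landing in a report nobody reads.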

Xygeni DevAI is an agentic security copilot available as a VS Code extension and IntelliJ/JetBrains plugin that runs incremental SAST scanning as developers write code, explains exploit paths for detected vulnerabilities, and delivers fix suggestions validated by the Xygeni MCP Server for risk, policy, and breaking-change impact. Secrets detection, SCA, and IaC scanning all run in the same IDE session. Learn more →

6. Monitor for anomalous behavior from AI coding tools

Agentic AI tools, meaning tools that take autonomous actions in your environment rather than just generating suggestions, introduce a new threat surface. An agentic coding tool with repository write access, pipeline trigger access, or secrets access is a high-value target if compromised.

CVE-2025-54135 (CurXecute), a remote code execution vulnerability in the Cursor AI code editor disclosed in August 2025, allowed arbitrary code execution on developers’ machines without user interaction. The Georgia Tech Vibe Security Radar research notes that attack surfaces are expanding rapidly as AI tools become more autonomous.

Behavioral monitoring for AI tool activity in your pipeline should watch for unexpected changes to CI/CD workflow configuration files (one of the clearest signals of a compromised AI tool or a prompt injection attack), AI coding tool processes making network requests to unexpected destinations during build time, unusual access patterns to secrets stores from developer workstations, and new dependencies introduced by AI tools that weren’t present in previous builds.
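Two of these signals, workflow file changes and newly introduced dependencies, reduce to simple comparisons against a previous known-good build. The sketch below assumes you already have the list of changed paths and the dependency sets for each build; the path patterns and function names are illustrative rather than taken from any monitoring product.

```python
from fnmatch import fnmatchcase
from typing import Iterable, List, Set

# Illustrative CI configuration locations; extend for the CI systems you run.
CI_CONFIG_PATTERNS = (
    ".github/workflows/*.yml",
    ".github/workflows/*.yaml",
    ".gitlab-ci.yml",
    "Jenkinsfile",
)


def ci_config_changes(changed_paths: Iterable[str]) -> List[str]:
    """Return changed paths that touch CI/CD workflow configuration."""
    return [
        path
        for path in changed_paths
        if any(fnmatchcase(path, pattern) for pattern in CI_CONFIG_PATTERNS)
    ]


def new_dependencies(previous: Set[str], current: Set[str]) -> List[str]:
    """Return dependencies present in this build but absent from the previous one."""
    return sorted(current - previous)
```

Either result being non-empty is a prompt for a human look, especially when the commit came from an agentic tool rather than a developer.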

| Layer | Control | Priority |
|---|---|---|
| Code | SAST on every commit, low FPR configuration | Critical |
| Code | IDE security feedback in VS Code / IntelliJ | High |
| Secrets | Pre-commit hooks + continuous repo scanning | Critical |
| Secrets | Git history scanning for valid legacy secrets | Critical |
| Secrets | Automatic revocation on detection | Critical |
| Dependencies | SCA with malware + slopsquatting detection | Critical |
| Dependencies | Reachability-filtered CVE prioritization | High |
| Pipeline | Build blocks on new critical findings | High |
| Pipeline | Dependency policy enforcement at build time | High |
| Pipeline | SBOM generation for AI-assisted releases | Medium |
| Agentic tools | Behavioral monitoring of AI tool activity | High |
| Agentic tools | Least-privilege access for AI coding tools | High |

How Xygeni Secures AI-Generated Code End-to-End

Securing AI-generated code requires coverage across the full SDLC, from the moment a developer accepts a suggestion to the moment the artifact reaches production. Point tools that cover only one layer leave gaps that AI-speed development will reliably find.

| Stage | Xygeni Capability | What It Catches |
|---|---|---|
| In the IDE | DevAI + MCP Server | Vulnerabilities at write-time, before commit |
| At commit | SAST + Secrets Security | Code flaws, hardcoded credentials, exposed API keys |
| At build | SCA with malware detection + reachability | Malicious or vulnerable AI-suggested dependencies |
| In pipeline | CI/CD Security + Anomaly Detection | Unsafe builds, agentic tool compromise, injected workflows |
| Post-deployment | DAST + ASPM | Runtime exploitability validation, unified risk posture |

The key differentiator is the intelligence layer that connects all of these. Xygeni’s MCP Server ensures that the fix suggestion DevAI generates in the IDE is evaluated for policy compliance, breaking change risk, and organizational context before it reaches the developer. AI-assisted remediation with guardrails, not with the safety off.

Final Thoughts

AI coding tools are generating a significant and growing share of enterprise code. They’re also systematically introducing security vulnerabilities in the patterns that matter most: missing auth, exposed secrets, insecure dependencies, and design flaws that static scanners miss.

The answer isn’t to restrict AI tool usage. It’s to build security infrastructure that scales with AI development velocity. The teams that get this right ship AI-assisted features faster and more safely than teams that treat AI code like human code with a slightly higher bug rate.

It isn’t. And your pipeline needs to know the difference.

👉 Start your free trial and scan your first AI-assisted repository in minutes, no credit card required.

👉 Book a demo and see how Xygeni maps to your specific AI development stack.

👉 Download the whitepaper, Secure Vibe Coding Before It Becomes Your Organization’s Biggest AI Risk.

About the Author

Co-Founder & CTO

Fátima Said specializes in developer-first content for AppSec, DevSecOps, and software supply chain security. She turns complex security signals into clear, actionable guidance that helps teams prioritize faster, reduce noise, and ship safer code.