Xygeni today expanded its Application Security Platform with a Model Context Protocol (MCP) server that allows AI assistants inside IDEs to execute coordinated security workflows across the entire SDLC.

Rather than introducing another AI prompt wrapper, Xygeni exposes its full security engine (SAST, SCA, Secrets, IaC, remediation intelligence, guardrails, and risk analysis) through a structured MCP interface. Any MCP-compatible copilot can invoke these capabilities directly from the developer workspace.

The result is not just AI-assisted coding, but AI-orchestrated security enforcement embedded at the source of code generation.

Turning AI Assistants into Security Executors

AI coding assistants have increased developer velocity, but they have also expanded the attack surface. Generated code often bypasses secure design reviews, dependency scrutiny, and policy enforcement until later stages of the pipeline.

The Xygeni MCP server changes that model.

Instead of waiting for CI scans, the copilot can call Xygeni tools in real time. From within the IDE, it can:

- Trigger SAST scans

- Run SCA analysis

- Run secrets detection

- Run malware detection

- Validate IaC and pipeline security

- Generate secure fixes

- Calculate remediation risk

- Validate project guardrails

- Execute policy audits

All through structured tool calls exposed by the MCP server.
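To make "structured tool call" concrete: MCP is built on JSON-RPC 2.0, and a client invokes a server tool by sending a `tools/call` request that carries the tool name and its arguments. The sketch below only builds such a message. The tool name `sast_scan` and its `path` argument are hypothetical placeholders for illustration and are not taken from Xygeni's documentation.

```python
import json
from itertools import count

# Monotonic request ids, as JSON-RPC expects each request to carry an id.
_request_ids = count(1)


def build_tool_call(tool_name: str, arguments: dict) -> str:
    """Serialize an MCP `tools/call` request as a JSON-RPC 2.0 message."""
    request = {
        "jsonrpc": "2.0",
        "id": next(_request_ids),
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }
    return json.dumps(request)


# Hypothetical tool name and argument, chosen only for illustration.
message = build_tool_call("sast_scan", {"path": "."})
print(message)
```

In practice the copilot's MCP client produces these messages itself; the developer only sees the prompt and the result.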

A recent demonstration in Cursor shows a single structured instruction triggering multiple coordinated actions: scanning the project, generating a secure fix for a SAST finding, computing remediation risk for a vulnerable dependency, and validating policy compliance. The prompt is only the interface; the MCP server performs the orchestration.

From Detection to Security Decision Cycles

Traditional AppSec tooling operates in fragments. Scan results generate alerts. Developers review findings manually. Risk is assessed separately. Policies are enforced at merge time.

Xygeni’s MCP server compresses this into a unified security decision cycle.

When invoked by an AI assistant, the platform can:

- Detect a vulnerability

- Propose a secure fix

- Evaluate remediation risk before applying it

- Validate guardrails aligned with organizational policy

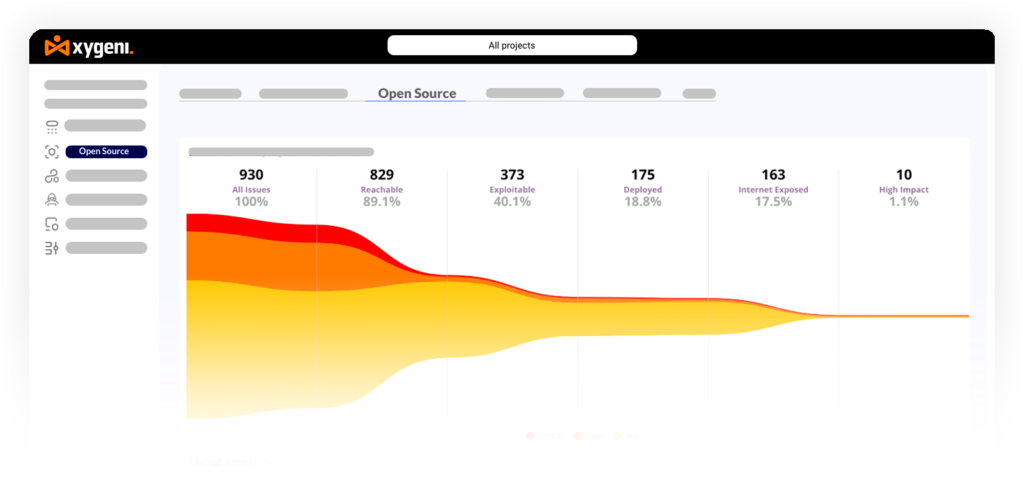

This workflow runs against the same proprietary engine that powers Xygeni’s platform-wide capabilities, including advanced prioritization and dynamic funnels described in its ASPM framework.

Instead of generating isolated recommendations, the MCP layer orchestrates full-context security execution.
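The four-step cycle above (detect, propose a fix, evaluate remediation risk, validate guardrails) can be pictured as a simple loop over tool calls. The following is a minimal sketch under stated assumptions: the tool names, the response shapes, and the `fake_call_tool` stand-in are all hypothetical, and the real platform may sequence or batch these steps differently.

```python
from dataclasses import dataclass, field
from typing import Callable

# A tool caller takes (tool_name, arguments) and returns a result dict.
ToolCaller = Callable[[str, dict], dict]


@dataclass
class Decision:
    finding_id: str
    fix_proposed: bool = False
    risk: str = "unknown"
    guardrails_passed: bool = False
    steps: list = field(default_factory=list)


def run_decision_cycle(call_tool: ToolCaller, path: str) -> list:
    """Run detect -> fix -> risk -> guardrails for every finding."""
    decisions = []
    scan = call_tool("scan", {"path": path})  # hypothetical tool name
    for finding in scan["findings"]:
        decision = Decision(finding_id=finding["id"], steps=["detect"])

        fix = call_tool("propose_fix", {"finding_id": finding["id"]})
        decision.fix_proposed = fix["patch"] is not None
        decision.steps.append("propose_fix")

        risk = call_tool("remediation_risk", {"finding_id": finding["id"]})
        decision.risk = risk["level"]
        decision.steps.append("evaluate_risk")

        guard = call_tool("validate_guardrails", {"path": path})
        decision.guardrails_passed = guard["passed"]
        decision.steps.append("validate_guardrails")

        decisions.append(decision)
    return decisions


def fake_call_tool(name: str, arguments: dict) -> dict:
    """Stand-in for a real MCP client; returns canned responses."""
    canned = {
        "scan": {"findings": [{"id": "F-1"}]},
        "propose_fix": {"patch": "--- a/app.py"},
        "remediation_risk": {"level": "low"},
        "validate_guardrails": {"passed": True},
    }
    return canned[name]


decisions = run_decision_cycle(fake_call_tool, ".")
```

Passing the tool caller in as a parameter keeps the orchestration logic testable without a live server.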

Remediation Risk Built Into the Flow

Fixing vulnerabilities at scale introduces operational risk. Upgrading a dependency may resolve a CVE but break APIs or introduce incompatibilities.

Xygeni’s remediation risk engine, also exposed via MCP, evaluates upgrade impact before changes are merged. It analyzes compatibility implications and flags potential breaking changes, enabling safer automated remediation workflows.
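As a rough illustration of what upgrade-risk evaluation means, the sketch below classifies a dependency upgrade purely from its semantic version numbers: a major bump is treated as likely breaking, a minor bump as moderate, and a patch bump as low risk. This is a deliberately simplified heuristic and not Xygeni's method, which the announcement describes as analyzing compatibility implications. It also assumes plain `MAJOR.MINOR.PATCH` strings and ignores pre-release tags and the looser guarantees of `0.x` versions.

```python
def parse_version(version: str) -> tuple:
    """Parse a plain MAJOR.MINOR.PATCH string into a tuple of ints."""
    major, minor, patch = (int(part) for part in version.split("."))
    return major, minor, patch


def upgrade_risk(current: str, target: str) -> str:
    """Classify an upgrade by the most significant version part that changes."""
    cur, tgt = parse_version(current), parse_version(target)
    if tgt <= cur:
        return "none"    # same version or a downgrade: not an upgrade
    if tgt[0] != cur[0]:
        return "high"    # major bump: breaking API changes are permitted
    if tgt[1] != cur[1]:
        return "medium"  # minor bump: new features, meant to be compatible
    return "low"         # patch bump: bug fixes only


print(upgrade_risk("1.2.3", "2.0.0"))
```

A version-only check like this can flag candidates for closer review, but it cannot confirm that a specific API the project uses has actually changed.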

This capability extends the SCA Autofix model, including bulk remediation and pull request generation with upgrade-risk detection.

The objective is clear: fix fast without destabilizing production.

Guardrails Enforced Before Code Moves Forward

Security policies are often enforced late, during merge approval or deployment. By that stage, remediation becomes costly.

Through MCP orchestration, guardrails can be validated immediately after scanning or remediation. If conditions such as “no critical reachable vulnerabilities” or “no active secrets” are not met, the AI assistant can surface the violation before the code advances.
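The two example conditions can be expressed as predicates over scan findings. The sketch below is illustrative only: the `Finding` fields and the guardrail registry are assumptions made for this example and do not reflect how Xygeni defines or stores guardrails.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Finding:
    kind: str                # "vulnerability" or "secret"
    severity: str = "low"
    reachable: bool = False  # meaningful for vulnerabilities
    active: bool = False     # meaningful for secrets


def no_critical_reachable_vulnerabilities(findings) -> bool:
    return not any(
        f.kind == "vulnerability" and f.severity == "critical" and f.reachable
        for f in findings
    )


def no_active_secrets(findings) -> bool:
    return not any(f.kind == "secret" and f.active for f in findings)


# Guardrail name -> predicate that must hold for the code to advance.
GUARDRAILS = {
    "no critical reachable vulnerabilities": no_critical_reachable_vulnerabilities,
    "no active secrets": no_active_secrets,
}


def violated_guardrails(findings) -> list:
    """Return the names of guardrails that the findings violate."""
    return [name for name, check in GUARDRAILS.items() if not check(findings)]


findings = [
    Finding(kind="vulnerability", severity="critical", reachable=False),
    Finding(kind="secret", active=True),
]
violations = violated_guardrails(findings)
print(violations)
```

Here the critical vulnerability is not reachable, so only the secrets guardrail is reported as violated.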

This shifts enforcement left without adding friction.

Securing AI-Driven Development Without Adding Friction

Xygeni’s MCP server does not replace existing copilots. It augments them.

By exposing structured security tools to AI agents, Xygeni allows organizations to maintain:

- Policy-aware AI remediation

- Reachability-based prioritization

- Malware and dependency protection

- Secrets validation and auto-revocation

- CI/CD and supply chain visibility

All executed within the developer’s existing workflow.

With its MCP server, Xygeni turns AI coding assistants into governed security executors, embedding detection, remediation, risk evaluation, and policy enforcement directly inside the IDE.